I’ve been very concerned about the fate of my SharePoint Online (SPO) public sites as of late. It’s March of 2017, and I know that Microsoft intends to pull the plug on all of those SPO public sites in the not-so-distant future. I have three of them myself: one for my wife’s non-profit organization (for which I’m also the CTO), one for my LLC, and a final one for my musical labor of love.

A while back, I pleaded with Microsoft publicly to give us some help before they shut things down for the SPO public sites. Well, it would seem that we’ve been given some help in the form of an end-of-life reprieve.

I had heard about the possibility of Microsoft pushing back the “ya gotta move it” date for SPO public sites, but I hadn’t been looking all that closely to see if there was any movement on that front. Since this month is due to close out in the next few days, I decided I’d better actually take a look. So, I went into one of my tenants and found what I’d hoped to find:

Thank the Heavens!

If you’re like me and you haven’t been tracking things as closely as you might have liked, it turns out that you can spare your SharePoint Online public site a cruel and horrible death for roughly another year (i.e., until March 31 of 2018). The process for delaying your site’s demise is relatively straightforward and described in the body of this support article. If you want something a bit more visual, though, then the following walk-through might help you out.

- Sign in to your Office 365 tenant with a set of credentials that have the necessary rights to make changes to SharePoint Online settings. Go ahead – click the link I just supplied.

- Click on the waffle menu in the suite links bar near the top of the page. The waffle menu is opened by clicking those nine dots (arranged like a Rubik’s Cube). When you click the waffle menu button, you’ll get a menu with a bunch of tiles that looks something like the image above. You’re interested in the Admin button right now.

- Click the Admin button, and you’ll be taken to your tenant’s Admin center as shown on the right. I’ve branded my Bitstream Foundry tenant, so chances are your admin center is going to look different than mine – perhaps with a different color scheme and logo. Note that if your organization hasn’t assigned a logo, you won’t see one in the suite links bar.

- Along the left-hand side of the Admin center will be a set of collapsed drop-downs that represent your various administrative functions and management pages/areas. You’ll want to click on the Admin centers option at the bottom of the list to expand it as shown on the right. When you do this, you should see SharePoint listed between your Skype for Business and OneDrive options.

- Click on the SharePoint option, and you’ll be taken to the SharePoint admin center for your tenant. You’ll see the list of site collections that exist within your tenant in the main window area, and a toolbar will appear above the main window area providing you with options to create a new site collection, buy storage, and quite a bit more. You’ll also see the list of SharePoint-specific admin areas/options appear along the left-hand side of the admin page as shown to the right.

- Locate the settings option in the left-hand column and click it. Once you click it, you’ll see a whole host of settings that you can review and change. It is in this list that you’ll find the Postpone deletion of SharePoint Online public websites option buttons that I showed a bit earlier.

- Click on the I’d like to keep my public website until March 31, 2018 option button to pull your SPO public site off of death row.

- Scroll to the bottom of the page and click the OK button along the right-hand side of the page. This will save your change.

That’s all there is to it!

Can’t You Just Give Me the Shortcut?

Sure! If you’re not into clicking through all of the admin screens and options I just walked through, you can simply point your browser at https://{tenantName}-admin.sharepoint.com/_layouts/15/online/TenantSettings.aspx to get to the page which is shown in Step #6 above. Note that you’ll need to replace the {tenantName} token in the URL above with the actual name of your tenant to make this work for you.
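If you manage more than one tenant, the token substitution can be scripted. What follows is only a minimal sketch: the function name `tenant_settings_url`, the sample tenant name `contoso`, and the rule that a tenant name contains only letters, digits, and hyphens are all my own assumptions for illustration, not anything Microsoft documents here.

```python
import re

# URL pattern taken from the shortcut described above.
SETTINGS_URL = "https://{tenant}-admin.sharepoint.com/_layouts/15/online/TenantSettings.aspx"


def tenant_settings_url(tenant_name: str) -> str:
    """Build the SharePoint admin settings URL for a tenant name."""
    name = tenant_name.strip().lower()
    # Assumption: tenant names are made of letters, digits, and hyphens only.
    if not re.fullmatch(r"[a-z0-9-]+", name):
        raise ValueError(f"unexpected tenant name: {tenant_name!r}")
    return SETTINGS_URL.format(tenant=name)


if __name__ == "__main__":
    # "contoso" is a placeholder; substitute your own tenant name.
    print(tenant_settings_url("contoso"))
```

Paste the printed URL into a browser where you are already signed in as an admin; the script only builds the address and does not change any setting.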

A Few Notes

This process buys you roughly another year to get your act together and move your SPO public site. You’ll then have until March 31 of 2018 to locate another home for your site and/or its content.

If you don’t follow the process I’ve outlined, Microsoft calls out the following dates:

- Beginning May 1, 2017, anonymous access for your SPO public site will be removed.

- On September 1, 2017, Microsoft will be deleting SPO public sites which haven’t been protected via the opt-in I described above. If you haven’t saved your SPO public site content by 9/1, you’re going to lose it!
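To see how much runway each of those dates leaves, a few lines of date arithmetic will do. This is just a sketch: the dates are the ones quoted in this post, while the labels, the `days_remaining` helper, and the sample "today" of March 28, 2017 are assumptions I picked for illustration.

```python
from datetime import date
from typing import Dict

# Dates as quoted in this post; the labels are my own wording.
DEADLINES = {
    "anonymous access removed (no opt-in)": date(2017, 5, 1),
    "public site deleted (no opt-in)": date(2017, 9, 1),
    "public site kept until (with opt-in)": date(2018, 3, 31),
}


def days_remaining(today: date) -> Dict[str, int]:
    """Return the number of days from `today` to each deadline."""
    return {label: (deadline - today).days for label, deadline in DEADLINES.items()}


if __name__ == "__main__":
    # Hypothetical "today"; swap in date.today() to check your own runway.
    for label, days in days_remaining(date(2017, 3, 28)).items():
        print(f"{label}: {days} days")
```

A negative number means that date has already passed.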

Hopefully you’ll rest a bit easier (as I have been doing) after opting in to protect your public site(s). I intend to get my sites moved before next March, and I’ll likely detail that process in a future post. But for now … deep breaths!

A couple of weeks ago, I was down in Nashville, Tennessee speaking at

It’s currently late in May of 2016. The plug could get pulled on SharePoint Online public sites as soon as March 2017. The clock is ticking, time is running out, and I don’t yet have a plan for transitioning to something else for the sites I cited above.

Sure,  On behalf of all of the non-profits, small-to-mid-sized companies, user groups, and others stranded on SOPSI Island: please build us a reasonable bridge or provide us with some additional hand-holding (or services) to help us safely leave the island.

I used to write posts to sum up the various conferences at which I’ve spoken. That was feasible when I was only speaking at a conference or event here or there, but writing about every event is somewhat time-consuming nowadays. And besides, most of the posts would look about the same: “great event,” “lots of fun,” “awesome attendees,” etc.

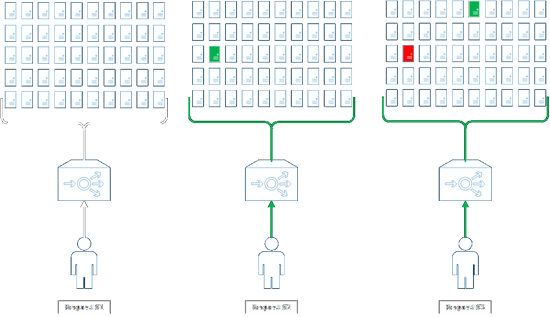

I’m a big fan of leveraging caching to improve performance. If you look over my blog, you’ll find quite a few articles that cover things like implementing BLOB caching within SharePoint, working with the Object Cache, extending your own code with caching options, and more. And most of those posts were written in a time when the on-premises SharePoint farm was king.

Update (Evening 7/23/2015)
