I’m a big fan of leveraging caching to improve performance. If you look over my blog, you’ll find quite a few articles that cover things like implementing BLOB caching within SharePoint, working with the Object Cache, extending your own code with caching options, and more. And most of those posts were written in a time when the on-premises SharePoint farm was king.

The “caching picture” began shifting when we started moving to the cloud. SharePoint Online and hosted SharePoint services aren’t the same as SharePoint on-premises, and the things we rely upon for performance improvements on-premises don’t necessarily have our backs when we move out to the cloud.

Yeah, I’m talking about caching here. And as much as it breaks my heart to say it, caching – you ain’t no friend of mine out in SharePoint Online.

Why the heartbreak?

To understand why a couple of SharePoint’s traditional caching mechanisms aren’t doing you any favors in a multi-tenant service like SharePoint Online (with or without Office 365), it helps to first understand how memory-based caching features – like SharePoint’s Object Cache – work in an on-premises environment.

On-Premises

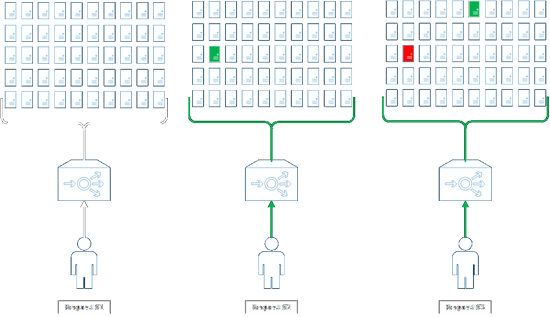

The typical on-premises environment has a small number of web front-ends (WFEs) serving content to users, and the number of site collections being served-up is relatively limited. For purposes of illustration, consider the following series of user requests to an environment possessing two WFEs behind a load balancer:

Assuming the WFEs have just been rebooted (or the application pools backing the web applications for the target site collection have just been recycled) – a worst-case scenario – the user in Request #1 is going to hit a server (either #1 or #2) that does not have cached content in its Object Cache. For this example, we’ll say that the user is directed to WFE #1. Responses from WFE #1 will be slower as SharePoint works to generate the content for the user and populate its Object Cache. The WFE will then return the user’s response, and as a result of the request, its Object Cache will now contain site collection-specific content such as navigational sitemaps, Content Query Web Part (CQWP) query results, common site property values, any publishing page layouts referenced by the request, and more.

The next time the farm receives a request for the same site collection (Request #2), there’s a 50/50 shot that the user will be directed to a WFE that has cached content (WFE #1, shown in green) or doesn’t yet have any cached content (WFE #2). If the user is directed to WFE #1, bingo – a better experience should result. Let’s say the user gets unlucky, though, and hits WFE #2. The same process as described earlier (for WFE #1) ensues, resulting in a slower response to the user but a populated Object Cache on WFE #2.

By the time we get to Request #3, both WFEs have at least some cached content for the site collection being visited and should thus return responses more quickly. Assuming memory pressure remains low, these WFEs will continue to serve cached content for subsequent requests – until content expires out of the cache (forcing a re-fetch and fill) or gets forced out for some reason (again, memory pressure or perhaps an application pool recycle).

Another thing worth noting with on-premises WFEs is that many SharePoint administrators use warm-up scripts and services in their environments to make the initial requests that are described (in this example) by Request #1 and Request #2. So, it’s possible in these environments that end-users never have to start with a completely “cold” WFE and make the requests that come back more slowly (but ultimately populate the Object Caches on each server).
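
To make the warm-up idea concrete, here is a minimal sketch in Python (real-world warm-up scripts for SharePoint are more often written in PowerShell). It assumes each WFE can be addressed directly by its own hostname, bypassing the load balancer, and that authentication is handled elsewhere; the hostnames, paths, and function names are all hypothetical.

```python
from urllib.request import urlopen


def fetch_status(url, timeout=60):
    """Request a URL and return the HTTP status code."""
    with urlopen(url, timeout=timeout) as response:
        return response.status


def warm_up(wfe_hosts, paths, fetch=fetch_status):
    """Request every path on every WFE so that each server fills its own cache."""
    results = {}
    for host in wfe_hosts:
        for path in paths:
            url = f"http://{host}{path}"
            try:
                results[url] = fetch(url)
            except OSError as error:  # URLError, HTTPError, and timeouts are OSError subclasses
                results[url] = f"failed: {error}"
    return results


# Demo with a stand-in fetch function so that no real network call is made.
demo = warm_up(
    ["wfe1.example.local", "wfe2.example.local"],  # hypothetical WFE hostnames
    ["/", "/sites/intranet/"],                     # hypothetical site collection URLs
    fetch=lambda url: 200,
)
for url, status in demo.items():
    print(url, status)
```

The important detail is the outer loop: every WFE gets its own set of requests, because each server maintains its own Object Cache.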

SharePoint Online

Let’s look at the same initial series of interactions again. Instead of considering the typical on-premises environment, though, let’s look at SharePoint Online.

The first thing you may have noticed in the diagrams above is that we’re no longer dealing with just two WFEs. In a SharePoint Online tenant, the actual number of WFEs is a variable number that depends on factors such as load. In this example, I set the number of WFEs to 50; in reality, it could be lower or (in all likelihood) higher.

Request #1 proceeds pretty much the same way as it did in the on-premises example. None of the WFEs have any cached content for the target site collection, so the WFE needs to do extra work to fetch everything needed for a response, return that information, and then place the results in its Object Cache.

In Request #2, one server has cached content – the one that’s highlighted in green. The remaining 49 servers don’t have cached content. So, in all likelihood (49 out of 50, or 98%), the next request for the same site collection is going to go to a different WFE.
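
The odds here are easy to work out. The sketch below assumes that every request is routed uniformly at random (no affinity) and that nothing is ever ejected from a cache, which is the best case; the function names are purely illustrative.

```python
from fractions import Fraction


def chance_of_cold_wfe(total_wfes: int, warm_wfes: int) -> float:
    """Probability that a uniformly routed request lands on a WFE with no cached content."""
    return (total_wfes - warm_wfes) / total_wfes


def expected_requests_to_warm_all(total_wfes: int) -> float:
    """Expected number of requests before every WFE has been hit at least once.

    This is the classic "coupon collector" result: n * (1 + 1/2 + ... + 1/n).
    """
    harmonic = sum(Fraction(1, k) for k in range(1, total_wfes + 1))
    return float(total_wfes * harmonic)


print(chance_of_cold_wfe(2, 1))                   # 0.5
print(chance_of_cold_wfe(50, 1))                  # 0.98
print(expected_requests_to_warm_all(2))           # 3.0
print(round(expected_requests_to_warm_all(50)))   # 225
```

With two WFEs, three requests (on average) are enough to warm the whole farm. With 50 WFEs, it takes roughly 225 requests for the same site collection, and that is before any cached content gets pushed out.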

By the time we get to Request #3, we see that another WFE has gone through the fetch-and-fill operation (again, highlighted in green). But, there’s something else worth noting that we didn’t see in the on-premises environment; specifically, the previous server which had been visited (in Request #1) is now red, not green. What does this mean? Well, in a multi-tenant environment like SharePoint Online, WFEs are serving-up hundreds and perhaps thousands of different site collections for the many tenants that share the environment. Object Caches do not have infinite memory, and so memory pressure is likely to be a much greater factor than it is on-premises – meaning that Object Caches are probably going to be ejecting content pretty frequently.

If the Object Cache on a WFE is forced to eject content relevant to the site collection a user is trying to access, then that WFE is going to have to do a re-fetch and re-fill just as if it had never cached content for the target site collection. The net effect, as you might expect, is longer response times and potentially sub-par performance.
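
A rough simulation shows how the two factors (more WFEs, shorter-lived cache entries) combine. This is a toy model rather than a description of how SharePoint Online actually manages memory: time is counted in requests, routing is uniformly random, and `cache_lifetime` is a made-up stand-in for expiry or ejection under memory pressure.

```python
import random


def simulate_hit_rate(total_wfes, requests, cache_lifetime, seed=0):
    """Fraction of requests served by a WFE that still holds cached content.

    A WFE that fills its cache at request t keeps the content through
    request t + cache_lifetime and then loses it.
    """
    rng = random.Random(seed)
    warm_until = [-1] * total_wfes  # last request index at which each WFE is still warm
    hits = 0
    for t in range(requests):
        wfe = rng.randrange(total_wfes)  # no affinity: any WFE is equally likely
        if warm_until[wfe] >= t:
            hits += 1  # cached content is still present
        else:
            warm_until[wfe] = t + cache_lifetime  # cold WFE: fetch and fill
    return hits / requests


on_prem = simulate_hit_rate(total_wfes=2, requests=10_000, cache_lifetime=200)
online = simulate_hit_rate(total_wfes=50, requests=10_000, cache_lifetime=20)
print(f"2 WFEs, long-lived cache:   {on_prem:.0%} of requests hit a warm WFE")
print(f"50 WFEs, short-lived cache: {online:.0%} of requests hit a warm WFE")
```

The specific numbers depend entirely on the assumed parameters, but the direction does not: spreading requests across more servers while shortening the life of cached content drives the hit rate down sharply.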

The Take-Away

If there’s one point I’m trying to make in all of this, it’s this: you can’t assume that the way a SharePoint farm operates on-premises is going to translate to the way a SharePoint Online farm (or any other multi-tenant farm) is going to operate “out in the cloud.”

Is there anything you can do? Sure – there’s plenty. As I’ve tried to illustrate thus far, the first thing you can do is challenge any assumptions you might have about performance that are based on how on-premises environments operate. The example I’ve chosen here is the Object Cache and how it factors into the performance equation – again, in the typical on-premises environment. If you assume that the Object Cache might instead be working against you in a multi-tenant environment, then there are two particular areas where you should immediately turn your focus.

Navigation

By default, SharePoint site collections use structural navigation mechanisms. Structural navigation works like this: when SharePoint needs to render a navigational menu or link structure of some sort, it walks through the site collection noting the various sites and sub-sites that the site collection contains. That information gets built into a sitemap, and that sitemap is cached in the Object Cache for faster retrieval on subsequent requests that require it.
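
To get a feel for why that walk gets expensive, here is a small sketch of the idea. It is not SharePoint's implementation; the `Site` class, the nested-dictionary sitemap, and the made-up hierarchy are assumptions for illustration only.

```python
from dataclasses import dataclass, field


@dataclass
class Site:
    title: str
    subsites: list["Site"] = field(default_factory=list)


def build_sitemap(site: Site) -> tuple[dict, int]:
    """Walk a site and its sub-sites; return the nested sitemap and the number of sites visited."""
    children = []
    visited = 1  # count this site
    for sub in site.subsites:
        child_map, child_count = build_sitemap(sub)
        children.append(child_map)
        visited += child_count
    return {"title": site.title, "children": children}, visited


def make_hierarchy(depth: int, fanout: int, title: str = "root") -> Site:
    """Build a made-up hierarchy with `fanout` sub-sites per site, `depth` levels below the root."""
    if depth == 0:
        return Site(title)
    return Site(title, [make_hierarchy(depth - 1, fanout, f"{title}/{i}") for i in range(fanout)])


for depth in (1, 2, 3):
    _, visited = build_sitemap(make_hierarchy(depth, fanout=5))
    print(f"depth {depth}: {visited} sites walked")  # 6, 31, and 156 sites
```

Every site in the hierarchy has to be visited to produce the sitemap, so the work grows with the size of the site collection. When the result is cached, that cost is paid occasionally; when it isn't, the cost is paid over and over.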

Without the Object Cache helping out, structural navigation becomes an increasingly less desirable choice as site hierarchies get larger and larger. Better options include alternatives like managed navigation or search-driven navigation; each option has its pros and cons, so be sure to read-up a bit before selecting an option.

Content Query Web Parts

When data needs to be rolled-up in SharePoint, particularly across lists or sites, savvy end-users turn to the CQWP. Since cross-list and cross-site queries are expensive operations, SharePoint will cache the results of such a query using – you guessed it – the Object Cache. Query results are then re-used from the Object Cache for a period of time to improve performance for subsequent requests. Eventually, the results expire and the query needs to be run again.
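
The cache-then-expire pattern described above looks something like the following sketch. It is a generic time-based cache, not the Object Cache itself; the class name, the 60-second lifetime, and the stand-in query are all assumptions.

```python
import time


class ExpiringCache:
    """Tiny time-based cache: results are reused until they are older than ttl_seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl_seconds = ttl_seconds
        self.clock = clock
        self._entries = {}  # key -> (expires_at, value)

    def get_or_run(self, key, run_query):
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # still fresh: skip the expensive query
        value = run_query()  # missing or expired: run the query again
        self._entries[key] = (now + self.ttl_seconds, value)
        return value


calls = []  # records each time the "expensive" query actually runs


def expensive_rollup():
    calls.append("ran")
    return ["item-1", "item-2"]


fake_now = [0.0]  # controllable clock so the demo doesn't have to sleep
cache = ExpiringCache(ttl_seconds=60, clock=lambda: fake_now[0])
cache.get_or_run("rollup", expensive_rollup)  # runs the query
cache.get_or_run("rollup", expensive_rollup)  # served from the cache
fake_now[0] = 61.0
cache.get_or_run("rollup", expensive_rollup)  # expired, so the query runs again
print(len(calls))  # 2
```

Three requests result in only two query executions here. That savings exists only when the same cache instance keeps getting asked, which is exactly what stops being true when requests are scattered across many WFEs.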

So, what are users to do when they can’t rely on the Object Cache? A common theme in SharePoint Online and other multi-tenant environments is to leverage Search whenever possible. This was called out in the previous section on Navigation, and it applies in this instance, as well.

An alternative to the CQWP is the Content Search Web Part (CSWP). The CSWP operates somewhat differently than the CQWP, so it’s not a one-to-one direct replacement … but it is very powerful and suitable in most cases. Since the CSWP pulls its query results directly from SharePoint’s search index, it’s exceptionally fast – making it just what the doctor ordered in a multi-tenant environment.

Quick note (2/1/2016): Thanks to Cory Williams for reminding me that the CSWP is currently only available to SharePoint Online Plan 2 and other “Plan 3” (e.g., E3, G3) users. Many enterprise customers fall into this bucket, but if you’re not one of them, then you won’t find the CSWP for use in your tenant :-(

There are plenty of good resources online for the CSWP, and I regularly speak on it myself; feel free to peruse resources I have compiled on the topic (and on other topics).

Wrapping-Up

In this article, I’ve tried to explain how on-premises and multi-tenant operations are different for just one area in particular; i.e., the Object Cache. In the future, I plan to cover some performance watch-outs and work-arounds for other areas … so stay tuned!

Hi, Sean. I just came across this article. Thanks for an interesting article and some good food for thought.

However, the only question I have for you is “are you sure this is how WFEs work in the cloud?” This seems like a huge oversight or short-coming (the multiple-WFE issue for “first responders”) by Microsoft and would also seem like a large bit of overhead on their hardware. Have you personally spoken to anyone at Microsoft on the cloud architecture team and verified that SP Online does indeed work this way? I would be very surprised if they do not have a coherent system in place helping to mitigate the issue of the delays to first users hitting a WFE. As you say, on-prem and online are two different animals. I’m willing to bet they differ in more ways than you are aware.

My gut tells me that at the very least their load balancers send traffic to just a couple of WFEs until the traffic increases to a certain level. It isn’t a random 1-out-of-50 scenario for each user. Also, it is quite likely that the fabric that moves content around from physical server to physical server for dynamically spinning up additional resources for a particular farm also plays a part, as do things like caching for SQL. Just because a WFE makes a SQL request doesn’t mean that the request actually hits a disk, as I’m sure you’re aware. So whether the content is actually cached on a WFE or not, I think the “delays” in your estimation are much more theoretical than actual. And the distributed cache between WFEs requires a 1ms response time between nodes. This means two requests more than a couple of ms apart are likely dipping into the distributed cache for some of the same content. There are likely many things mitigating the issues you are concerned about. Give Microsoft credit for having thought about this basic conundrum.

(My Author summary would be similar to yours as I have been working with IIS and SharePoint since WSS 3.0 and have created solutions and farm installations/configurations for many years as well as building virtual farms in Hyper-V and VMWare, created SQL clusters, and built many back offices.)

Hi Sir Poon,

First, thanks for taking the time to read and leave such a well-worded and thought-out comment.

I had a meeting with Scott Stewart this evening to work on a performance session we’re co-presenting at the SharePoint Conference North America in about a month. Scott is the Senior Program Manager for ODSP SPARC Engineering at Microsoft, and he’s uniquely qualified to weigh-in on this topic. I pointed him to my blog post, as well as the questions/concerns you voiced.

In not so many words, Scott verified the understanding that I offered of Object Caching and the role it plays (or seemingly *doesn’t* play) in SPO. As I pointed out in my post, a SharePoint Online tenant has a variable number of WFEs. My example citing 50 WFEs was actually on the low end; the average tenant tends to have between 100 and 200 operational WFEs. And it’s true that load balancers sit in front of those WFEs, but affinity of any sort is not enabled … so each request by a user to the environment has an equal chance of being routed to any of the WFEs for the tenant.

Given that an SPO tenant tends to have thousands of members, the Object Cache in the average tenant is rendered effectively useless. This does translate to increased SQL Server requests. And while SQL caching mechanisms may work to offset the impact a bit, it doesn’t change the fact that SharePoint’s own Object Cache doesn’t help (and that SQL has work to do to make up for that).

The delays that result from this are more than theoretical; they form the basis of the number-one slowdown that Microsoft sees in customer tenants; i.e., the use of structural navigation. Structural navigation is so “evil” for SPO sites that Microsoft is in the process of revising documentation to illuminate just how bad it is for SPO sites. Without the benefit of the Object Cache to store sitemaps, the frequent computation of navigational structures to build sitemaps is a constant and painful drain on sites using structural navigation.

One more thing I want to point out: you mention the “fabric” and Distributed Cache a couple of times in your post. SharePoint’s Distributed Cache is separate and distinct from the Object Cache, and it stores different data from the Object Cache.

So, the guidance you would get from Scott’s team (when it comes to the Object Cache) is along the same lines as what I laid out: don’t use structural navigation, avoid Content Query Web Parts (CQWPs), make use of managed navigation (or search-driven navigation), and use the Content Search Web Part whenever possible to replace the CQWP.

I hope this helps!