My adventures in SharePoint 2013 App Model Land have been going pretty well, but I recently encountered a limitation that left me sort of scratching my head.

The limitation applies to the creation of custom actions for SharePoint apps. To be more specific: the problem I’ve encountered is that there doesn’t appear to be a way to package and reference (using relative links) custom images for ribbon buttons like the one that’s circled in the image above and to the left. This doesn’t mean that custom images can’t be used, of course, but I’m not particularly fond of the work-around, and it isn’t even feasible in some application scenarios.

If you’re not familiar with the new SharePoint 2013 App Model, then you may want to do a little reading before proceeding with this post. I’m only going to cover the App Model concepts that are relevant to the limitation I observed and how to address/work-around it. However, if you are familiar with the new 2013 App Model and creating custom actions in SharePoint 2010, then you may want to jump straight down to the section titled Where the Headaches Begin.

One more warning: this post does some heavy digging into SharePoint’s internal processing of custom ribbon actions and URL tokens. If you want to skip all of that and head straight to the practical take-away, jump down to the What About the Image32by32 and Image16by16 Attributes section.

Adding a Ribbon Custom Action

First, let me do a quick run-through on custom actions. They aren’t unique to SharePoint 2013 or its new “Cloud App Model.” In fact, the type of custom action I’m talking about (i.e., extending the ribbon) became available when the Ribbon was introduced with SharePoint 2010.

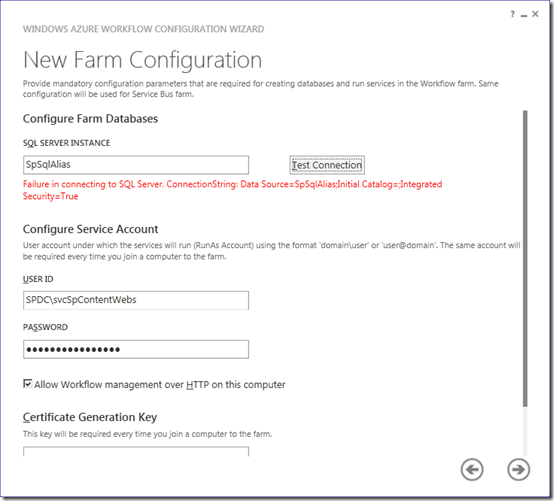

With a SharePoint 2013 App, adding a new button to the ribbon is a relatively simple affair. It starts with choosing the Ribbon Custom Action option from the Add New Item dialog as shown below and to the left. Once a name is provided for the custom action and the Add button is clicked, the Create Custom Action for Ribbon dialog appears as shown below and to the right. There’s a third dialog page that further assists in setting some properties for a custom action, but I’m going to skip over it since it isn’t relevant to the point I’m trying to make.

I want to call attention to one of the selections I made on the Create Custom Action for Ribbon dialog, though; specifically, the decision to expose the custom action in the Host Web rather than in the App Web.

Why is this choice so important? Well, the new App Model enforces a relatively strict boundary of separation between SharePoint sites and any custom applications (running under the new App Model) that they may contain. A SharePoint site (Host Web) can technically “host” applications, but those applications operate in an isolated App Web that may have components running on an entirely different server. Under the new App Model, no custom app code is running in the Host Web.

App Webs (where custom applications exist after installation) don’t have direct access to the Host Web in which they’re contained, either. In fact, App Webs are logically isolated from their Host Web parents. If App Webs want to communicate with their Host Web parent to interact with site collection data, for example, they have to do so through SharePoint’s Client-Side Object Model (CSOM) or the Representational State Transfer (REST) interface. The old full-trust, server-side object model isn’t available; everything is “client-side.”

There are some exceptions to this model of isolation, and one of those exceptions is the use of custom actions to allow an App (residing in an App Web) to partially wire itself into the Host Web. The Create Custom Action for Ribbon dialog shown above, for instance, adds a new button to the ribbon for each of the Document Libraries in the Host Web. This gives users a way to navigate directly from Document Libraries (in the Host Web) to a page in the App Web, for example.

The Elements.xml file that gets generated for the custom action once the Visual Studio wizard has finished running looks something like the following:

[sourcecode language=”XML” autolinks=”false”]
<?xml version="1.0" encoding="utf-8"?>
<Elements xmlns="http://schemas.microsoft.com/sharepoint/">
  <CustomAction Id="1470c964-6b8a-4d79-9817-4d32c898ffbe.RibbonCustomAction1"
                RegistrationType="List"
                RegistrationId="101"
                Location="CommandUI.Ribbon"
                Sequence="10001"
                Title="Invoke 'LibraryDetailsCustomAction' action">
    <CommandUIExtension>
      <!--
        Update the UI definitions below with the controls and the command actions
        that you want to enable for the custom action.
      -->
      <CommandUIDefinitions>
        <CommandUIDefinition Location="Ribbon.Library.Actions.Controls._children">
          <Button Id="Ribbon.Library.Actions.LibraryDetailsCustomActionButton"
                  Alt="Examine Library Details"
                  Sequence="100"
                  Command="Invoke_LibraryDetailsCustomActionButtonRequest"
                  LabelText="Examine Library Details"
                  TemplateAlias="o1"
                  Image32by32="_layouts/15/images/placeholder32x32.png"
                  Image16by16="_layouts/15/images/placeholder16x16.png" />
        </CommandUIDefinition>
      </CommandUIDefinitions>
      <CommandUIHandlers>
        <!-- Command must match the Command attribute of the Button above -->
        <CommandUIHandler Command="Invoke_LibraryDetailsCustomActionButtonRequest"
                          CommandAction="LibraryManager\Pages\LibraryDetails.aspx"/>
      </CommandUIHandlers>
    </CommandUIExtension>
  </CustomAction>
</Elements>
[/sourcecode]

Deploying the App that contains the custom action markup shown above creates a new button in the ribbon of each Host Web Document Library. By default, each button looks like the following:

There are a few attributes in the previous XML that I’m going to repeatedly come back to, so it’s worth taking a closer look at each one’s purpose and associated value(s):

- Image32by32 and Image16by16 for the <Button /> element. These two attributes specify the images that are used when rendering the custom action button on the ribbon. By default, they point to an orange dot placeholder image that lives in the farm’s _layouts folder.

- CommandAction for the <CommandUIHandler /> element. In its simplest form, this is the URL of the page to which the user is redirected upon pressing the custom ribbon button.

The Problem with the Default CommandAction

When a user clicks on a custom ribbon button in one of the Host Web document libraries, the goal is to send them over to a page in the App Web where the custom action can be processed. Unfortunately, the default CommandAction isn’t set up in a way that permits this.

[sourcecode language=”XML” autolinks=”false”]

CommandAction="LibraryManager\Pages\LibraryDetails.aspx"

[/sourcecode]

In fact, attempting to deploy the solution to Office 365 with this default CommandAction results in failure; the App package doesn’t pass validation.

To understand why the failure occurs, it’s important to remember the isolation that exists between the Host Web and the App Web. To illustrate how the Host Web and App Web are different from simply a hostname perspective, consider the project I’ve been working on as an example:

Notice that although the /sites/dev2 relative path portion is the same for both the Host Web and App Web URLs, the hostname portion of each URL is different. This is by design, and it helps to enforce the logical separation between the Host Web and App Web – even though the App Web technically resides within the Host Web.

Looking again at the default CommandAction attribute reveals that its value is just an ASPX page that is identified with a relative URL. Rather than pointing to where we want it to point …

[sourcecode language=”XML” autolinks=”false”]

https://mcdonough-bc920dbeb7ecd3.sharepoint.com/sites/dev2/LibraryManager/Pages/LibraryDetails.aspx

[/sourcecode]

… it ends up pointing to a non-existent destination in the Host Web:

[sourcecode language=”XML” autolinks=”false”]

https://mcdonough.sharepoint.com/sites/dev2/LibraryManager/Pages/LibraryDetails.aspx

[/sourcecode]

And this is exactly what should happen. After all, the custom action is launched from within the Host Web, so a relative path specification should resolve to a location in the Host Web – not the location we actually want to target in the App Web.

Fixing the CommandAction

Thankfully, it isn’t a major undertaking to correct the CommandAction attribute value so that it points to the App Web instead of the Host Web. If you’ve worked with SharePoint at all in the past, then you may know that the key to making everything work (in this situation) is the judicious use of tokens.

What are tokens? In this case, tokens are specific string sequences that SharePoint parses at run-time and replaces with a value based on the run-time environment, action that was performed, associated list, or some other context-sensitive value that isn’t known at design-time.

To illustrate how this works, consider the default CommandAction attribute:

[sourcecode language=”XML” autolinks=”false”]

CommandAction="LibraryManager\Pages\LibraryDetails.aspx"

[/sourcecode]

Modifying the attribute as follows changes the destination URL of the button so that the user is redirected to the desired page in the App Web rather than the Host Web:

[sourcecode language=”XML” autolinks=”false”]

CommandAction="~appWebUrl/Pages/LibraryDetails.aspx"

[/sourcecode]

The ~appWebUrl token is replaced at run-time with the actual URL of the associated App Web (https://mcdonough-bc920dbeb7ecd3.sharepoint.com/sites/dev2) to build the desired destination link.
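To put the corrected attribute back in context, here is a rough sketch of what the full handler section might look like. The ListID and SiteUrl query-string parameters (and their {ListId} and {SiteUrl} tokens) are borrowed from the deployed markup that shows up later in this post; treat them as optional extras rather than something the wizard generates for you. The Command value is simply assumed to match the Command on the button.

```xml
<CommandUIHandlers>
  <!-- Command is assumed to match the Command attribute on the ribbon Button element -->
  <!-- The ampersand in the query string must be escaped as &amp; inside an XML attribute -->
  <CommandUIHandler Command="Invoke_LibraryDetailsCustomActionButtonRequest"
                    CommandAction="~appWebUrl/Pages/LibraryDetails.aspx?ListID={ListId}&amp;SiteUrl={SiteUrl}" />
</CommandUIHandlers>
```

Passing the list and site along on the query string gives the target page in the App Web enough context to know which Host Web library the user came from.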

SharePoint defines a whole host of URL strings and tokens for use in Apps. As it turns out, a fairly complete list has been aggregated and defined in a handy little page on MSDN. Thanks to the always-helpful Andrew Clark for pointing this out to me; I hadn’t realized Microsoft had pulled so many tokens together in one place!

Where the Headaches Begin

Since tokens are the key to inserting context-dependent values at run-time, you’d think they’d have been implemented and usable anywhere a developer needs to cross the Host Web / App Web divide.

Apparently not. To be more specific (and fair), I should instead say “not consistently.”

Since this blog post is about image limitations with custom ribbon buttons, you can probably guess where I’m headed with all of this. So, let’s take a look at the Image16by16 and Image32by32 attributes.

By default, the Image16by16 and Image32by32 attributes point to a location in the _layouts folder for the farm. Each attribute value references an image that is nothing more than a little round orange dot:

[sourcecode language=”XML” autolinks=”false”]

Image32by32="_layouts/15/images/placeholder32x32.png"

Image16by16="_layouts/15/images/placeholder16x16.png"

[/sourcecode]

Much like the CommandAction attribute, it stands to reason that developers would want to replace the placeholder image attribute values with URLs of their choosing. In my case, I wanted to use a set of images I was deploying with the rest of the application assets in my App Web. So, I updated my image attributes to look like the following:

[sourcecode language=”XML” autolinks=”false”]

Image32by32="~appWebUrl/Images/sharepoint-library-analyzer_32x32-a.png"

Image16by16="~appWebUrl/Images/sharepoint-library-analyzer_16x16-a.png"

[/sourcecode]

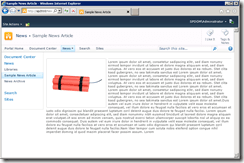

I deployed my App to my Office 365 Preview tenant, watched my browser launch into my App Web, hopped back to the Host Web, navigated to a document library, and looked at the toolbar. I was not happy with what I saw (on the left).

The image I had specified for use by the button wasn’t being used. All I had was a broken image link.

Examining the properties for the broken image quickly confirmed my fear: the ~appWebUrl token was not being processed for either the Image32by32 or the Image16by16 attribute. The token was being output directly into the image references.

I tried changing the image attributes to reference the App Web a couple of different ways (and with a couple of different tokens), but none of them seemed to work.

I did a little digging, and I saw that Chris Hopkins (over at Microsoft) covered this very topic for sandboxed solutions in SharePoint 2010. In Chris’ article, though, it was clear that tokens such as ~site and ~sitecollection were valid for use by the Image32by32 and Image16by16 attributes.

To see if I was losing my mind, I decided to try a little experiment. Although I knew it wouldn’t solve my particular problem, I decided to try using the ~site token just to see if it would be parsed properly. Lo and behold, it was parsed and replaced. ~site worked. So, ~site worked … but ~appWebUrl didn’t?

That didn’t make any sense. If it isn’t possible to use the ~appWebUrl token, how are developers supposed to reference custom images for the buttons they deploy in their Apps? Without the ~appWebUrl, there’s no practical way to reference an item in the App Web from the Host Web.

Token Forensics

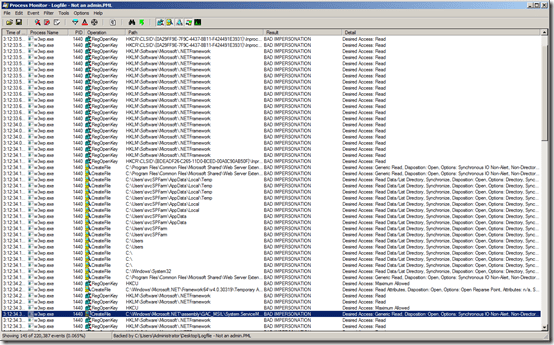

When I find myself in situations where I’m holding results that don’t make sense, I can’t help myself: I pull out Reflector and start poking around for clues inside SharePoint’s plumbing. If I dig really hard, sometimes I find answers to my questions.

After some poking around with Reflector, I discovered that the “journey to enlightenment” (in this case) started with the RegisterCommandUIWithRibbon method on the SPCustomActionElement type. It is in this method that the Image16by16 and Image32by32 attributes are read-in from the XML file in which they are defined. Before assignment for use, they’re passed through a couple of methods that carry out token parsing:

- ReplaceUrlTokens on the SPCustomActionElement type

- UrlFromPrefixedUrlCore on the SPUtility type

Although these methods together are capable of recognizing and replacing many different token types (including some I hadn’t seen listed in existing documentation; e.g., ~siteCollectionLayouts), none of the new SharePoint 2013 tokens, like the ~appWebUrl and ~remoteAppUrl tokens, appear in these methods.

Interestingly enough, I didn’t see any noteworthy differences between the path of execution for processing image attributes and the sequence of calls through which CommandAction attributes are handled in the RegisterCommandUIExtension method of the SPRibbon type. The RegisterCommandUIExtension method eventually “punches down” to the ReplaceUrlTokens and UrlFromPrefixedUrlCore methods, as well.

The differences I was seeing in how tokens were handled between the CommandAction and Image32by32/Image16by16 attributes had to be originating somewhere else – not in the processing of the custom action XML.

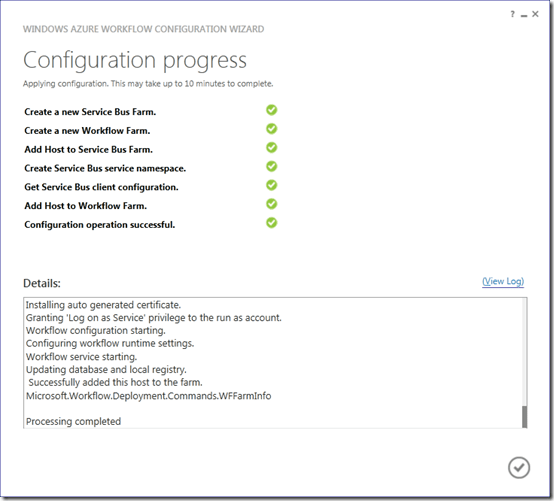

Deployment Modifications

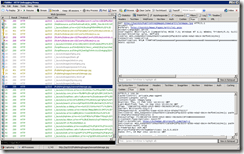

After some more digging in Reflector to determine where the ~appWebUrl actually showed-up and was being processed, I came across evidence suggesting that “something special” was happening on App deployment rather than at run-time. The ~appWebUrl token was being processed as part of a BuildTokenMap call in the SPAppInstance type; looking at the call chain for the BuildTokenMap method revealed that it was getting called during some App deployment operations processing.

If changes were taking place on App deployment, then I had a hunch I might find what I was looking for in the content database housing the Host Web to which my App was being deployed. After all, Apps get deployed to App Webs that reside within a Host Web, and Host Webs live in content databases … so, all of the pieces of my App had to exist (in some form) in the content database.

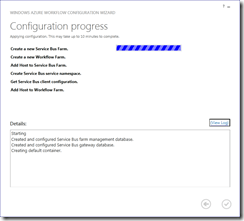

I fired-up Visual Studio, stopped deploying to Office 365, and started deploying my App to a site collection on my local SharePoint 2013 VM farm. Once my App was deployed, I launched SQL Management Studio on the SQL Server housing the SharePoint databases and began poking around inside the content database where the target site collection was located.

Brief aside: standard rules still apply in SharePoint 2013, so I’ll mention them here for those who may not know them. Don’t poke around inside content databases (or any other databases) in live SharePoint environments you care about. As with previous versions, querying and working against live databases may hurt performance and lead to bigger problems. If you want to play with the contents of a SharePoint database, either create a SQL snapshot of it (and work against the snapshot) or mount a backup copy of the database in a test environment.

I wasn’t sure what I was looking for, so I quickly examined the contents of each table in the content database. I hit paydirt when I opened-up the CustomActions table. It had a single row, and the Properties field of that row contained some XML that looked an awful lot like the Elements.xml which defined my custom action:

[sourcecode language=”XML” autolinks=”false”]
<?xml version="1.0" encoding="utf-16"?>
<Elements xmlns="http://schemas.microsoft.com/sharepoint/">
  <CustomAction Title="Invoke 'LibraryDetailsCustomAction' action" Id="4f835c73-a3ab-4671-b142-83304da0639f.LibraryDetailsCustomAction" Location="CommandUI.Ribbon" RegistrationId="101" RegistrationType="List" Sequence="10001">
    <CommandUIExtension xmlns="http://schemas.microsoft.com/sharepoint/">
      <!--
        Update the UI definitions below with the controls and the command actions
        that you want to enable for the custom action.
      -->
      <CommandUIDefinitions>
        <CommandUIDefinition Location="Ribbon.Library.Actions.Controls._children">
          <Button Id="Ribbon.Library.Actions.LibraryDetailsCustomActionButton" Alt="Examine Library Details" Sequence="100" Command="Invoke_LibraryDetailsCustomActionButtonRequest" LabelText="Examine Library Details" Image16by16="~site/Images/sharepoint-library-analyzer_16x16-a.png" Image32by32="~appWebUrl/Images/sharepoint-library-analyzer_32x32-a.png" TemplateAlias="o1"/>
        </CommandUIDefinition>
      </CommandUIDefinitions>
      <CommandUIHandlers>
        <CommandUIHandler Command="Invoke_LibraryDetailsCustomActionButtonRequest" CommandAction="javascript:LaunchApp('709d9f25-bb39-4e6a-97d5-6e1d7c855f38', 'i:0i.t|ms.sp.int|a441fa2c-8c5f-4152-9085-3930239ab21b@9db0b916-0dd6-4d6c-be49-41f72f5dfc02', '~appWebUrl\u002fPages\u002fLibraryDetails.aspx?ListID={ListId}\u0026SiteUrl={SiteUrl}', null);"/>
      </CommandUIHandlers>
    </CommandUIExtension>
  </CustomAction>
</Elements>
[/sourcecode]

There were some differences, though, between the Elements.xml I had defined earlier and what actually appeared in the Properties field. I narrowed my focus to the differences that existed between the non-working Image32by32/Image16by16 attributes

[sourcecode language=”XML” autolinks=”false”]

Image16by16="~appWebUrl/Images/sharepoint-library-analyzer_16x16-a.png"

Image32by32="~appWebUrl/Images/sharepoint-library-analyzer_32x32-a.png"

[/sourcecode]

… and the CommandAction attribute.

[sourcecode language=”XML” autolinks=”false”]

CommandAction="javascript:LaunchApp('709d9f25-bb39-4e6a-97d5-6e1d7c855f38', 'i:0i.t|ms.sp.int|a441fa2c-8c5f-4152-9085-3930239ab21b@9db0b916-0dd6-4d6c-be49-41f72f5dfc02', '~appWebUrl\u002fPages\u002fLibraryDetails.aspx', null);"

[/sourcecode]

As suspected, some deployment-time processing had been performed on the CommandAction attribute but not on the image attributes. The CommandAction still contained an ~appWebUrl token, but it was wrapped as part of a parameter call to a LaunchApp JavaScript function that appeared to be handled (or rather, executed) from a client-side browser.

Jumping into my App in Internet Explorer and opening IE’s debugging tools via <F12>, I did a search for the LaunchApp function within the referenced scripts and found it in the core.js library/script. Examining the LaunchApp function revealed that it called the LaunchAppInternal function; LaunchAppInternal, in turn, called back to the SharePoint server’s /_layouts/15/appredirect.aspx page with the parameters that were supplied to the original LaunchApp method – including the URL with the ~appWebUrl token.

To complete the journey, I opened up the Microsoft.SharePoint.ApplicationPages.dll assembly back on the server and dug into the AppRedirectPage class that provides the code-behind support for the AppRedirect.aspx page. When the AppRedirect.aspx page is loaded, control passes to the page’s OnLoad event and then to the HandleRequest method. HandleRequest then uses the ReplaceAppTokensAndFixLaunchUrl method of the SPTenantAppUtils class to process tokens.

The ReplaceAppTokensAndFixLaunchUrl method is noteworthy because it includes parsing and replacement support for the ~appWebUrl token, the ~remoteAppUrl token, and other tokens that were introduced with SharePoint 2013. The deployment-time processing that is performed on the CommandAction attribute is what ultimately wires-up the CommandAction to the ReplaceAppTokensAndFixLaunchUrl method. The Image32by32 and Image16by16 attributes don’t get this treatment, and so the new 2013 tokens (like ~appWebUrl) can’t be used by these attributes.

What About the Image32by32 and Image16by16 Attributes?

Now that some of the key differences in processing between the CommandAction attribute and image attributes have been identified, let me jump back to the original problem. Is there anything that can be done with the Image32by32 and Image16by16 attributes that are specified in a custom action to get them to reference assets that exist in the App Web? Since tokens like ~appWebUrl (and ~remoteAppUrl for all you Autohosted and Provider-hosted application builders) aren’t parsed and processed, are there alternatives?

My response is a somewhat wishy-washy “doubtful.” In my estimation, you’d need to hack SharePoint with something like a javascript: tag for an image attribute (which, interestingly enough, doesn’t appear to be expressly blocked), find some way to obtain the App Web URL base, formulate the proper path to the image, and more. If it could be done, you’d be gaming SharePoint … and I could easily see a cumulative update or service pack breaking this type of elaborate work-around.

The safest and most pragmatic way to handle this situation, it seems, is to use absolute URLs for the desired image resources and forget about deploying them to the App Web altogether. For example, I placed the images I was trying to use on the ribbon buttons here on my blog and referenced them as follows:

[sourcecode language=”XML” autolinks=”false”]

Image16by16="http://sharepointinterface.com/wp-content/uploads/2013/01/sharepoint-library-analyzer_16x16-a.png"

Image32by32="http://sharepointinterface.com/wp-content/uploads/2013/01/sharepoint-library-analyzer_32x32-a.png"

[/sourcecode]

I had some initial concerns that I might inadvertently bump into some security boundaries, such as those that sometimes arise when an asset is referenced via HTTP from a site that is being served up under HTTPS. This didn’t prove to be the case, however. I tested the use of absolute URLs in both my development VM environment (served up under HTTP) and through one of my Office 365 Preview site collections (accessed via HTTPS), and no browser security warnings popped up. The target image appeared on the custom button as desired (shown on the left) in both cases.

Although the use of absolute URLs will work in many cases, I have to admit that I’m still not a big fan of this approach – especially for SharePoint-hosted apps like the one I’ve been working on. Even though Office 365 entails an “always connected” scenario, I can easily envision on-premises deployment environments that are taken offline some or all of the time. I can also see (and have seen in the past) SharePoint environments where unfettered Internet access is the exception rather than the rule.

In these environments, users won’t see image buttons at all – just blank placeholders or broken image links. After all, without Internet access there is no way to resolve and download the referenced button images.

Wrapping It Up

At some point in the future, I hope that Microsoft considers extending token parsing for URL-based attributes like Image32by32 and Image16by16 to include the ~appWebUrl, ~remoteAppUrl, and other new tokens used by the SharePoint 2013 App Model. In the meantime, though, you should probably consider getting an easily accessible online location (SkyDrive, Dropbox, a blog, etc.) for images and other similar assets if you’re building apps under the new SharePoint 2013 App Model and intend to use custom actions.

Update (1/27/2013)

I need to issue a couple of updates and clarifications. First, I need to be very clear and state that SharePoint-hosted apps were the focus of this post. In a SharePoint-hosted app, what I’ve written is correct: there is no processing of “new” 2013 tokens (like ~appWebUrl and ~remoteAppUrl) for the Image32by32 and Image16by16 attributes. Interestingly enough, though, there does appear to be processing of the ~remoteAppUrl in the Image32by32 and Image16by16 attributes specifically for the other application types such as provider-hosted apps and autohosted apps. Jamie Rance mentioned this in a comment (below), and I verified it with an autohosted app that I quickly spun-up.

I double-checked to see if the ~remoteAppUrl token would even be recognized/processed (despite the lack of a remote web component) for SharePoint-hosted apps, and it is not … nor is the ~appWebUrl token processed for autohosted apps. The selective implementation of only the ~remoteAppUrl token for certain app types has me baffled; I hope that we’ll eventually see some clarification or changes. If you’re building provider-hosted or autohosted apps, though, this does give you a way to redirect image requests to your remote web application rather than an absolute endpoint. Thank you, Jamie, for the information!
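For the provider-hosted and autohosted folks, the button markup might end up looking something like the sketch below. I’m assuming here that the remote web application serves the images from an Images folder at its root; that path is purely illustrative, so adjust it to wherever your remote web actually keeps its static assets.

```xml
<!-- Sketch for provider-hosted/autohosted apps only; ~remoteAppUrl is not processed for SharePoint-hosted apps -->
<!-- The /Images/ folder in the remote web is an assumed, hypothetical location -->
<Button Id="Ribbon.Library.Actions.LibraryDetailsCustomActionButton"
        Alt="Examine Library Details"
        Sequence="100"
        Command="Invoke_LibraryDetailsCustomActionButtonRequest"
        LabelText="Examine Library Details"
        TemplateAlias="o1"
        Image32by32="~remoteAppUrl/Images/sharepoint-library-analyzer_32x32-a.png"
        Image16by16="~remoteAppUrl/Images/sharepoint-library-analyzer_16x16-a.png" />
```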

And now for some good news for SharePoint-hosted app creators. Prior to writing this post, I had posted a question about the tokens over in the SharePoint Exchange forums. At the time I wrote this post, there hadn’t been any activity to suggest that a solution or workaround existed. F. Aquino recently supplied an incredibly creative answer, though, that involves using a data URI to Base64-encode the images and package them directly into the Image32by32 and Image16by16 attributes themselves! Although this means that some image pre-processing will be required to package images, it gets around the requirement of being “always-connected.” This is an awesome technique, and I’ll certainly be adding it to my arsenal. Thank you, F. Aquino!
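In rough terms, the data URI approach would make the image attributes look something like the following sketch. The BASE64_OF_… values are placeholders of my own, not real data; each one stands in for the full Base64-encoded contents of the corresponding PNG file, which you would generate with whatever encoding tool you prefer.

```xml
<!-- Sketch only: replace each BASE64_OF_... placeholder with the Base64-encoded bytes of the PNG -->
<Button Id="Ribbon.Library.Actions.LibraryDetailsCustomActionButton"
        Alt="Examine Library Details"
        Sequence="100"
        Command="Invoke_LibraryDetailsCustomActionButtonRequest"
        LabelText="Examine Library Details"
        TemplateAlias="o1"
        Image32by32="data:image/png;base64,BASE64_OF_32x32_PNG"
        Image16by16="data:image/png;base64,BASE64_OF_16x16_PNG" />
```

Since the image bytes travel inside the custom action markup itself, the browser never has to make a separate request for the image; the trade-off is a larger Elements.xml and an extra encoding step whenever an image changes.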

References and Resources

- MSDN: How to: Create custom actions to deploy with apps for SharePoint

- MSDN: Apps for SharePoint overview

- MSDN: Customizing and Extending the SharePoint 2010 Server Ribbon

- MSDN: How to: Complete basic operations using SharePoint 2013 client library code

- MSDN: How to: Complete basic operations using SharePoint 2013 REST endpoints

- MSDN: URL strings and tokens in apps for SharePoint

- Twitter: Andrew Clark

- Chris Hopkins’ Visilog: Using images on your ribbon buttons from a sandboxed solution in SharePoint 2010

- Software: Red Gate’s Reflector

- Service: Microsoft’s SkyDrive

- Service: Dropbox

Sometimes it’s hard to believe just how quickly time flies by. At the tail end of last December, I announced that some big changes were coming for me – namely that I would be transitioning into something new from an employment perspective. Today is the last day of normal business in March 2013, and that means my time with Idera is at an end.

Sometimes it’s hard to believe just how quickly time flies by. At the tail end of last December, I announced that some big changes were coming for me – namely that I would be transitioning into something new from an employment perspective. Today is the last day of normal business in March 2013, and that means my time with Idera is at an end.

Although I’m going to miss my friends at Idera and wish them the best of luck going forward, I’m very excited about some things I’ve got cooking – particularly with my new company!

Although I’m going to miss my friends at Idera and wish them the best of luck going forward, I’m very excited about some things I’ve got cooking – particularly with my new company! Things are falling into place with the new company, as well. I applied for membership in Microsoft’s BizSpark program yesterday, and within hours I was accepted – much to my surprise. Why was I surprised? Well, my company website is (at the moment) being redirected to a “coming soon” page I put together on the new Microsoft Azure Web Sites offering. I’ve been waiting for an Office 365 tenant upgrade so that I can build out a proper site on SharePoint 2013, but the upgrade seems to be taking much longer than originally expected …

Things are falling into place with the new company, as well. I applied for membership in Microsoft’s BizSpark program yesterday, and within hours I was accepted – much to my surprise. Why was I surprised? Well, my company website is (at the moment) being redirected to a “coming soon” page I put together on the new Microsoft Azure Web Sites offering. I’ve been waiting for an Office 365 tenant upgrade so that I can build out a proper site on SharePoint 2013, but the upgrade seems to be taking much longer than originally expected …

My adventures in SharePoint 2013 App Model Land have been going pretty well, but I recently encountered a limitation that left me sort of scratching my head.

My adventures in SharePoint 2013 App Model Land have been going pretty well, but I recently encountered a limitation that left me sort of scratching my head.

Thankfully, it isn’t a major undertaking to correct the CommandAction attribute value so that it points to the App Web instead of the Host Web. If you’ve worked with SharePoint at all in the past, then you may know that the key to making everything work (in this situation) is the judicious use of tokens.

Since tokens are the key to inserting context-dependent values at run-time, you’d think they’d have been implemented and usable anywhere a developer needs to cross the Host Web / App Web divide.

Now that some of the key differences in processing between the CommandAction attribute and image attributes have been identified, let me jump back to the original problem. Is there anything that can be done with the Image32by32 and Image16by16 attributes that are specified in a custom action to get them to reference assets that exist in the App Web? Since tokens like ~appWebUrl

Let me start with the most impactful change-up: my full-time role as Chief SharePoint Evangelist for

1. Manage Distractions More Effectively. Working at home can be a double-edged sword. If I were single and better-disciplined, I’d see working at home as the ability to do whatever I wanted without distraction. That’s not the reality in my world, though. Where I can remove distractions, I intend to.

I’ve been doing a bit of build-out with the new SharePoint 2013 Preview in anticipation of some development work, and

You’ve undoubtedly heard the news: SharePoint 2013 is coming. The preview is available right now, and you can

Scalability in the hardware and software space is all about

Here’s another blog post to file in the “I’ll probably never need it, but you never know” bucket of things you’ve seen from me.

This post is my way of doing something akin to a SharePoint public service announcement. I’ve recently seen some caching-related functionality and topics – especially the BLOB Cache – getting some real traction in different circles, and I think that the attention and love is generally a good thing. I am somewhat concerned, though, by the fact that the discussions and projects that have been surfacing don’t seem to say much beyond the Post-It on the right.

I hope the example featuring Wiley did an adequate job of explaining why I think that blindly turning on the BLOB Cache can be a bad thing for end users. Having seen first-hand what an improperly configured BLOB Cache can do to the user experience, I’d like to offer up a handful of suggestions based on my own experience.

I don’t know how 2011 ended for most of you, but the year closed without much of a bang for me. I’m not complaining about that; the general slow-down gave me an opportunity to get caught up on a few things, and it was nice to spend some quality time with my friends and family.
