As someone who spends most days working with (and thinking about) SharePoint, there’s one thing I can say without any doubt: Microsoft Teams has taken off like a rocket bound for low Earth orbit. It’s rare these days for me to discuss SharePoint without some mention of Teams.

I’m confident that many of you know the reason for this. Besides being a replacement for Skype, many of Teams’ back-end support systems and dependent service implementations are based in – you guessed it – SharePoint Online (SPO).

As one might expect, any technology product that is evolving rapidly and seeing enterprise adoption will reveal gaps and imperfect implementations as it grows, and Teams is no different. I’m confident that Teams will mature and eventually address the shortcomings people are currently finding, but until it does, some of us will try to close the gaps we find with the tools at our disposal.

Administrative Pain

One of those Teams pain points we discussed recently on the Microsoft Community Office Hours webcast was the challenge of changing ownership for a large number of Teams at once. We took on a question from Mark Diaz, who posed the following:

May I ask how do you transfer the ownership of all Teams that a user is managing if that user is leaving the company? I know how to change the owner of the Teams via Teams admin center if I know already the Team that I need to update. Just consulting if you do have an easier script to fetch what teams he or she is an owner so I can add this to our SOP if a user is leaving the company.

Mark Diaz

We discussed Mark’s question (amidst our normal joking around) and posited that PowerShell could provide an answer. And since I like to goof around with PowerShell and scripting, I agreed to take on Mark’s question as “homework.”

The rest of this post is my direct response to Mark’s question and request for help. I hope this does the trick for you, Mark!

Teams PowerShell

Anyone who has spent any time as an administrator in the Microsoft ecosystem of cloud offerings knows that Microsoft is very big on automating administrative tasks with PowerShell. And being a cloud workload in that ecosystem, Teams is no different.

Microsoft Teams has its own PowerShell module, which can be installed and referenced in your script development environment in a number of different ways that Microsoft has documented. This MicrosoftTeams module is a prerequisite for some of the cmdlets you’ll see me use a bit further down in this post.
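Because the script leans on cmdlets that only exist once the MicrosoftTeams module is available, it can be handy to confirm they resolve before doing anything else. The sketch below is a hypothetical helper (Test-RequiredCommand is my own name for it, not part of any Microsoft module) that returns whichever of the named commands can’t be found; an empty result means everything is present.

```powershell
# Hypothetical helper (not part of MicrosoftTeams): returns the names of any
# commands that cannot be resolved in the current session.
function Test-RequiredCommand {
    param(
        [Parameter(Mandatory = $true)]
        [string[]]$Name
    )
    foreach ($commandName in $Name) {
        # Get-Command can also resolve commands from installed modules that
        # haven't been imported yet; SilentlyContinue suppresses "not found" errors.
        if (-not (Get-Command -Name $commandName -ErrorAction SilentlyContinue)) {
            $commandName
        }
    }
}

# The Teams cmdlets that ReplaceTeamsOwners.ps1 relies on.
$teamsCmdlets = 'Connect-MicrosoftTeams', 'Get-Team', 'Get-TeamUser', 'Add-TeamUser', 'Remove-TeamUser'
$missing = @(Test-RequiredCommand -Name $teamsCmdlets)
if ($missing.Count -gt 0) {
    Write-Host "Missing cmdlets: $($missing -join ', ')"
}
```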

The MicrosoftTeams module isn’t the only way to work with Teams in PowerShell, though. I would have loved to build my script upon the Microsoft Graph PowerShell module … but it’s still in what is termed an “early preview” release. Given that bit of information, I opted to use the “older but safer/more mature” MicrosoftTeams module.

The Script: ReplaceTeamsOwners.ps1

Let me just cut to the chase. I put together my ReplaceTeamsOwners.ps1 script to address the specific scenario Mark Diaz asked about. The script accepts a handful of parameters (this next bit lifted straight from the script’s internal documentation):

.PARAMETER currentTeamsOwner
A string that contains the UPN of the user who will be replaced in the
ownership changes. This parameter is mandatory. Example: bob@EvilCorp.com

.PARAMETER newTeamsOwner
A string containing the UPN of the user who will be assigned as the new
owner of Teams teams (i.e., in place of the currentTeamsOwner). This parameter
is mandatory. Example: jane@AcmeCorp.com

.PARAMETER confirmEachUpdate
A switch parameter that, if specified, will require the user executing the
script to confirm each ownership change before it happens; helps to ensure
that only the desired changes get made.

.PARAMETER isTest
A boolean that indicates whether or not the script will actually be run against
and/or make changes to Teams teams and associated structures. This value defaults
to TRUE, so actual script runs must explicitly set isTest to FALSE to effect
changes on Teams teams ownership.

So both currentTeamsOwner and newTeamsOwner must be specified, and that’s fairly intuitive. If the -confirmEachUpdate switch is supplied, then each possible ownership change triggers a confirmation prompt, allowing you to approve ownership changes on a case-by-case basis.
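Under the hood, confirmEachUpdate is just a [Switch] parameter gating a Read-Host prompt. Here’s a minimal, self-contained sketch of that pattern. Select-ConfirmedTeam and its -Prompt parameter are hypothetical (the prompt is injectable only so the logic can be exercised without typing), and, like the script, it treats only a trimmed, case-insensitive “y” as approval.

```powershell
# Hypothetical sketch of the confirmEachUpdate pattern; not part of ReplaceTeamsOwners.ps1.
function Select-ConfirmedTeam {
    param(
        [Parameter(Mandatory = $true)]
        [string[]]$TeamName,

        # Like confirmEachUpdate: when absent, every team is approved.
        [Switch]$ConfirmEach,

        # Assumption: the prompt is pluggable for testing; defaults to Read-Host.
        [ScriptBlock]$Prompt = { param($name) Read-Host " - Change ownership of '$name' (Y/N)?" }
    )
    foreach ($name in $TeamName) {
        if (-not $ConfirmEach) {
            $name        # no confirmation requested; emit the team unconditionally
            continue
        }
        $response = & $Prompt $name
        # Mirrors the script: only "y" (any case, surrounding spaces ignored) approves.
        # Wrapping in quotes guards against a $null reply.
        if ("$response".Trim().ToLower() -eq 'y') {
            $name
        }
    }
}
```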

The one parameter that might be a little confusing is the script’s isTest parameter. If unspecified, this parameter defaults to TRUE … and this is something I’ve been putting in my scripts for ages. It’s sort of like PowerShell’s -WhatIf switch in that it allows you to understand the path of execution without actually making any changes to the environment and targeted systems/services. In essence, it’s a “dry run.”

The difference between my isTest and PowerShell’s -WhatIf is that you have to explicitly set isTest to FALSE to “run the script for real” (i.e., make changes) rather than remembering to include -WhatIf to ensure that changes aren’t made. If someone forgets about the isTest parameter and runs my script, no worries – the script is in test mode by default. My scripts fail safe and without relying on an admin’s memory, unlike -WhatIf.
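To make the isTest versus -WhatIf distinction concrete, here’s a small, hypothetical function (Set-DemoTeamOwner, which operates on a plain hashtable rather than a real team) that supports both. IsTest defaults to TRUE as in my scripts, while -WhatIf comes from [CmdletBinding(SupportsShouldProcess = $true)] and only protects you if you remember to pass it.

```powershell
# Hypothetical demo comparing the two approaches; a hashtable stands in for a real team.
function Set-DemoTeamOwner {
    [CmdletBinding(SupportsShouldProcess = $true)]
    param(
        [Parameter(Mandatory = $true)]
        [hashtable]$Team,

        [Parameter(Mandatory = $true)]
        [string]$NewOwner,

        # Fail-safe default: nothing changes unless the caller passes -IsTest $false.
        [Boolean]$IsTest = $true
    )
    if ($IsTest) {
        Write-Host "isTest is set; '$($Team.Name)' would have changed owner to '$NewOwner'."
        return $false
    }
    # -WhatIf support: ShouldProcess returns $false (and prints "What if: ...") when -WhatIf is passed.
    if ($PSCmdlet.ShouldProcess($Team.Name, "Change owner to $NewOwner")) {
        $Team.Owner = $NewOwner
        return $true
    }
    return $false
}
```

With `$team = @{ Name = 'Sales'; Owner = 'bob' }`, a bare `Set-DemoTeamOwner -Team $team -NewOwner 'jane'` is a dry run, whereas adding `-IsTest $false` changes the owner immediately unless -WhatIf is also remembered.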

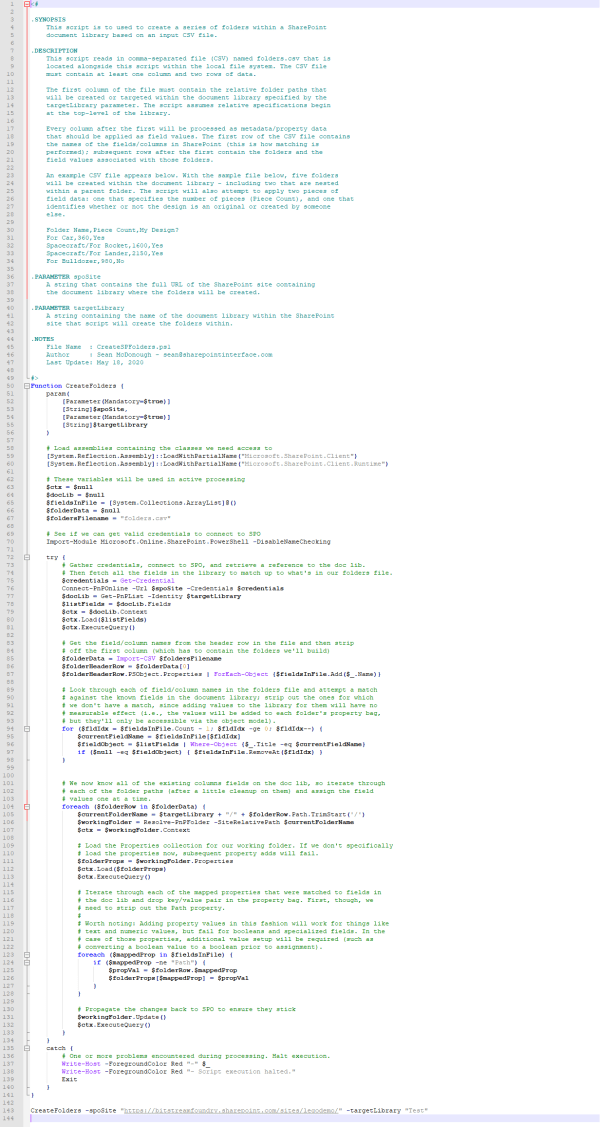

And now … the script!

<#
.SYNOPSIS
This script is used to replace all instances of a Teams team owner with the
identity of another account. This might be necessary in situations where a
user leaves an organization, administrators change, etc.

.DESCRIPTION
Anytime a Microsoft Teams team is created, an owner must be associated with
it. Oftentimes, the team owner is an administrator or someone who has no
specific tie to the team.

Administrators tend to change over time; at the same time, teams (as well as
other IT "objects", like SharePoint sites) undergo transitions in ownership
as an organization evolves.

Although it is possible to change the owner of a Microsoft Teams team through
the M365 Teams console, the process only works for one team at a time. If
someone leaves an organization, it's often necessary to transfer all objects
for which that user had ownership.

That's what this script does: it accepts a handful of parameters and provides
an expedited way to transition ownership of Teams teams from one user to
another.

.PARAMETER currentTeamsOwner
A string that contains the UPN of the user who will be replaced in the
ownership changes. This parameter is mandatory. Example: bob@EvilCorp.com

.PARAMETER newTeamsOwner
A string containing the UPN of the user who will be assigned as the new
owner of Teams teams (i.e., in place of the currentTeamsOwner). This parameter
is mandatory. Example: jane@AcmeCorp.com

.PARAMETER confirmEachUpdate
A switch parameter that, if specified, will require the user executing the
script to confirm each ownership change before it happens; helps to ensure
that only the desired changes get made.

.PARAMETER isTest
A boolean that indicates whether or not the script will actually be run against
and/or make changes to Teams teams and associated structures. This value defaults
to TRUE, so actual script runs must explicitly set isTest to FALSE to effect
changes on Teams teams ownership.

.NOTES
File Name  : ReplaceTeamsOwners.ps1
Author     : Sean McDonough - sean@sharepointinterface.com
Last Update: September 2, 2020
#>

Function ReplaceOwners {
    param(
        [Parameter(Mandatory=$true)]
        [String]$currentTeamsOwner,
        [Parameter(Mandatory=$true)]
        [String]$newTeamsOwner,
        [Parameter(Mandatory=$false)]
        [Switch]$confirmEachUpdate,
        [Parameter(Mandatory=$false)]
        [Boolean]$isTest = $true
    )

    # Perform the prerequisite checks, starting with the required PowerShell modules.
    Clear-Host
    Write-Host ""
    Write-Host "Attempting prerequisite operations ..."
    $paramCheckPass = $true

    # First - see if we have the MSOnline module installed. -ErrorAction SilentlyContinue
    # keeps Get-InstalledModule from writing an error when the module is absent.
    try {
        Write-Host "- Checking for presence of MSOnline PowerShell module ..."
        $checkResult = Get-InstalledModule -Name "MSOnline" -ErrorAction SilentlyContinue
        if ($null -ne $checkResult) {
            Write-Host " - MSOnline module already installed; now importing ..."
            Import-Module -Name "MSOnline" | Out-Null
        }
        else {
            Write-Host "- MSOnline module not installed. Attempting installation ..."
            Install-Module -Name "MSOnline" | Out-Null
            $checkResult = Get-InstalledModule -Name "MSOnline" -ErrorAction SilentlyContinue
            if ($null -ne $checkResult) {
                Import-Module -Name "MSOnline" | Out-Null
                Write-Host " - MSOnline module successfully installed and imported."
            }
            else {
                Write-Host ""
                Write-Host -ForegroundColor Yellow " - MSOnline module not installed or loaded."
                $paramCheckPass = $false
            }
        }
    }
    catch {
        Write-Host -ForegroundColor Red "- Unexpected problem encountered with MSOnline import attempt."
        $paramCheckPass = $false
    }

    # Our second order of business is to make sure we have the PowerShell cmdlets we need
    # to execute this script.
    try {
        Write-Host "- Checking for presence of MicrosoftTeams PowerShell module ..."
        $checkResult = Get-InstalledModule -Name "MicrosoftTeams" -ErrorAction SilentlyContinue
        if ($null -ne $checkResult) {
            Write-Host " - MicrosoftTeams module installed; will now import it ..."
            Import-Module -Name "MicrosoftTeams" | Out-Null
        }
        else {
            Write-Host "- MicrosoftTeams module not installed. Attempting installation ..."
            Install-Module -Name "MicrosoftTeams" | Out-Null
            $checkResult = Get-InstalledModule -Name "MicrosoftTeams" -ErrorAction SilentlyContinue
            if ($null -ne $checkResult) {
                Import-Module -Name "MicrosoftTeams" | Out-Null
                Write-Host " - MicrosoftTeams module successfully installed and imported."
            }
            else {
                Write-Host ""
                Write-Host -ForegroundColor Yellow " - MicrosoftTeams module not installed or loaded."
                $paramCheckPass = $false
            }
        }
    }
    catch {
        Write-Host -ForegroundColor Yellow "- Unexpected problem encountered with MicrosoftTeams import attempt."
        $paramCheckPass = $false
    }

    # Have we taken care of all necessary prerequisites?
    if ($paramCheckPass) {
        Write-Host -ForegroundColor Green "Prerequisite check passed. Press Enter to continue."
        Read-Host
    } else {
        Write-Host -ForegroundColor Red "One or more prerequisite operations failed. Script terminating."
        # return (rather than Exit) ends the function without closing an interactive session.
        return
    }

    # We can now begin. First step will be to get the user authenticated so they can actually
    # do something (and we'll have a tenant context).
    Clear-Host
    try {
        Write-Host "Please authenticate to begin the owner replacement process."
        $creds = Get-Credential
        Write-Host "- Credentials gathered. Connecting to Azure Active Directory ..."
        Connect-MsolService -Credential $creds | Out-Null
        Write-Host "- Now connecting to Microsoft Teams ..."
        Connect-MicrosoftTeams -Credential $creds | Out-Null
        Write-Host "- Required connections established. Proceeding with script."

        # We need the list of AAD users to validate our target and replacement.
        Write-Host "Retrieving list of Azure Active Directory users ..."
        $currentUserUPN = $null
        $currentUserId = $null
        $currentUserName = $null
        $newUserUPN = $null
        $newUserId = $null
        $newUserName = $null
        # -All matters: without it, Get-MsolUser returns at most 500 users, so owners in
        # larger tenants could wrongly be reported as "not found."
        $allUsers = Get-MsolUser -All
        Write-Host "- Users retrieved. Validating ID of current Teams owner ($currentTeamsOwner)"
        $currentAADUser = $allUsers | Where-Object {$_.SignInName -eq $currentTeamsOwner}
        if ($null -eq $currentAADUser) {
            Write-Host -ForegroundColor Red "- Current Teams owner could not be found in Azure AD. Halting script."
            return
        }
        else {
            $currentUserUPN = $currentAADUser.UserPrincipalName
            $currentUserId = $currentAADUser.ObjectId
            $currentUserName = $currentAADUser.DisplayName
            Write-Host " - Current user found. Name='$currentUserName', ObjectId='$currentUserId'"
        }

        Write-Host "- Now validating ID of new Teams owner ($newTeamsOwner)"
        $newAADUser = $allUsers | Where-Object {$_.SignInName -eq $newTeamsOwner}
        if ($null -eq $newAADUser) {
            Write-Host -ForegroundColor Red "- New Teams owner could not be found in Azure AD. Halting script."
            return
        }
        else {
            $newUserUPN = $newAADUser.UserPrincipalName
            $newUserId = $newAADUser.ObjectId
            $newUserName = $newAADUser.DisplayName
            Write-Host " - New user found. Name='$newUserName', ObjectId='$newUserId'"
        }
        Write-Host "Both current and new users exist in Azure AD. Proceeding with script."

        # If we've made it this far, then we have valid current and new users. We need to
        # fetch all Teams to get their associated GroupId values, and then examine each
        # GroupId in turn to determine ownership.
        $allTeams = Get-Team
        $teamCount = $allTeams.Count
        Write-Host
        Write-Host "Begin processing of teams. There are $teamCount total team(s)."

        foreach ($currentTeam in $allTeams) {
            # Retrieve basic identification information
            $groupId = $currentTeam.GroupId
            $groupName = $currentTeam.DisplayName
            $groupDescription = $currentTeam.Description
            Write-Host "- Team name: '$groupName'"
            Write-Host " - GroupId: '$groupId'"
            Write-Host " - Description: '$groupDescription'"

            # Get the users associated with the team and determine if the target user is
            # currently an owner of it.
            $currentIsOwner = $null
            $groupOwners = (Get-TeamUser -GroupId $groupId) | Where-Object {$_.Role -eq "owner"}
            $currentIsOwner = $groupOwners | Where-Object {$_.UserId -eq $currentUserId}

            # Do we have a match for the targeted user?
            if ($null -eq $currentIsOwner) {
                # No match; we're done for this cycle.
                Write-Host " - $currentUserName is not an owner."
            }
            else {
                # We have a hit. Is confirmation needed?
                $performUpdate = $false
                Write-Host " - $currentUserName is currently an owner."
                if ($confirmEachUpdate) {
                    $response = Read-Host " - Change ownership to $newUserName (Y/N)?"
                    if ($response.Trim().ToLower() -eq "y") {
                        $performUpdate = $true
                    }
                }
                else {
                    # Confirmation not needed. Do the update.
                    $performUpdate = $true
                }

                # Change ownership if the appropriate flag is set
                if ($performUpdate) {
                    # We need to check if we're in test mode.
                    if ($isTest) {
                        Write-Host -ForegroundColor Yellow " - isTest flag is set. No ownership change processed (although it would have been)."
                    }
                    else {
                        Write-Host " - Adding '$newUserName' as an owner ..."
                        Add-TeamUser -GroupId $groupId -User $newUserUPN -Role owner
                        Write-Host " - '$newUserName' is now an owner. Removing old owner ..."
                        # With -Role owner, Remove-TeamUser demotes the old owner to a regular member.
                        Remove-TeamUser -GroupId $groupId -User $currentUserUPN -Role owner
                        Write-Host " - '$currentUserName' is no longer an owner."
                    }
                }
                else {
                    Write-Host " - No changes in ownership processed for $groupName."
                }
                Write-Host ""
            }
        }

        # We're done; let the user know.
        Write-Host -ForegroundColor Green "All Teams processed. Script concluding."
        Write-Host ""
    }
    catch {
        # One or more problems encountered during processing. Halt execution.
        Write-Host -ForegroundColor Red "-" $_
        Write-Host -ForegroundColor Red "- Script execution halted."
        return
    }
}

ReplaceOwners -currentTeamsOwner bob@EvilCorp.com -newTeamsOwner jane@AcmeCorp.com -isTest $true -confirmEachUpdate

Don’t worry if you don’t feel like trying to copy and paste that whole block. I zipped up the script, and you can download it as ReplaceTeamsOwners.zip (listed in the references at the end of this post).

A Brief Script Walkthrough

I like to make an admin’s life as simple as possible, so the first part of the script (after the comments/documentation) is an attempt to import (and if necessary, first install) the PowerShell modules needed for execution: MSOnline and MicrosoftTeams.
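The MSOnline and MicrosoftTeams blocks in the script are nearly identical, so one possible refactoring is a single helper called once per module. This is a hedged sketch, not the published script: Initialize-RequiredModule is a hypothetical name, it swaps Get-InstalledModule for Get-Module -ListAvailable (which also sees modules that weren’t installed through PowerShellGet), and installing with -Scope CurrentUser is my assumption to avoid needing an elevated session.

```powershell
# Hypothetical consolidation of the script's two install/import blocks into one helper.
function Initialize-RequiredModule {
    param(
        [Parameter(Mandatory = $true)]
        [string]$Name
    )
    try {
        # Get-Module -ListAvailable also finds modules that weren't installed via
        # Install-Module, which Get-InstalledModule would miss.
        if (-not (Get-Module -ListAvailable -Name $Name)) {
            Write-Host "- $Name module not installed. Attempting installation ..."
            # Assumption: a per-user install avoids needing an elevated session.
            Install-Module -Name $Name -Scope CurrentUser -ErrorAction Stop
        }
        Import-Module -Name $Name -ErrorAction Stop
        Write-Host "- $Name module imported."
        return $true
    }
    catch {
        Write-Host -ForegroundColor Yellow "- $Name module not installed or loaded: $_"
        return $false
    }
}
```

In the script, `$results = 'MSOnline', 'MicrosoftTeams' | ForEach-Object { Initialize-RequiredModule -Name $_ }` followed by `$paramCheckPass = $results -notcontains $false` would attempt both modules (a plain `-and` chain would skip the second after a failure) while preserving the same pass/fail flag.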

From there, the current owner and new owner identities are verified before the script goes through the process of getting Teams and determining which ones to target. I believe that the inline comments are written in relatively plain English, and I include a lot of output to the host to spell out what the script is doing each step of the way.
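The targeting step is the heart of Mark’s question: which teams does the departing user own? Below is a hedged, testable restatement of the script’s loop. Get-TeamOwnedByUser is a hypothetical name, and the -GetMember script block is an assumption added so the logic can run against sample data; against a real tenant it would wrap Get-TeamUser. The MicrosoftTeams module’s Get-Team also accepts a -User parameter, which could shrink the initial list to teams the user belongs to before the owner check.

```powershell
# Hypothetical, testable restatement of the script's team-targeting loop.
function Get-TeamOwnedByUser {
    param(
        # Objects exposing GroupId and DisplayName, such as Get-Team output.
        [Parameter(Mandatory = $true)]
        [object[]]$Team,

        # Azure AD object ID of the departing owner.
        [Parameter(Mandatory = $true)]
        [string]$UserId,

        # Returns a team's members; against a real tenant this would be
        # { param($groupId) Get-TeamUser -GroupId $groupId }
        [Parameter(Mandatory = $true)]
        [ScriptBlock]$GetMember
    )
    foreach ($currentTeam in $Team) {
        # Same filters as the script: keep owners, then look for the target user.
        $owners = & $GetMember $currentTeam.GroupId | Where-Object { $_.Role -eq 'owner' }
        if ($owners | Where-Object { $_.UserId -eq $UserId }) {
            $currentTeam
        }
    }
}
```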

The last line in the script is simply the invocation of the ReplaceOwners function with the parameters I wanted to use. You can leave this line in and change the parameters, take it out, or use the script however you see fit.
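If the call ends up in a leaving-employee SOP, as Mark intended, splatting keeps it easy to review and edit. In this sketch, the ReplaceOwners function is a stand-in stub with the same parameters as the real one so the example runs on its own; it’s an assumption for illustration only, so drop it and load the actual script (minus its final invocation line) to use the splatted call for real.

```powershell
# Stand-in stub with the same parameters as the script's ReplaceOwners function,
# so this sketch runs without any tenant. Remove it when using the real script.
function ReplaceOwners {
    param(
        [Parameter(Mandatory = $true)]
        [String]$currentTeamsOwner,
        [Parameter(Mandatory = $true)]
        [String]$newTeamsOwner,
        [Switch]$confirmEachUpdate,
        [Boolean]$isTest = $true
    )
    "Replace $currentTeamsOwner with $newTeamsOwner (isTest=$isTest, confirmEachUpdate=$confirmEachUpdate)"
}

# Parameters for one departing user; isTest is left at its default (TRUE) for the dry run.
$replaceArgs = @{
    currentTeamsOwner = 'bob@EvilCorp.com'
    newTeamsOwner     = 'jane@AcmeCorp.com'
    confirmEachUpdate = $true
}
ReplaceOwners @replaceArgs
```

Once the dry run looks right, adding `isTest = $false` to the hashtable is the single, deliberate change needed to run it for real.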

Here’s a screenshot of a full script run in my family’s tenant (mcdonough.online) where I’m attempting to see which Teams my wife (Tracy) currently owns that I want to assume ownership of. Since the script is run with isTest being TRUE, no ownership is changed – I’m simply alerted to where an ownership change would have occurred if isTest were explicitly set to FALSE.

Conclusion

So there you have it. I put this script together during a relatively slow afternoon. I tested it and made it as error-free as I could with the tenants I have, but I’d still recommend testing it yourself (with isTest left at TRUE, at least) before executing it “for real” against your production system(s).

And Mark D: I hope this meets your needs.

References and Resources

- Microsoft: Microsoft Teams

- buckleyPLANET: Microsoft Community Office Hours, Episode 24

- YouTube: Excerpt from Microsoft Community Office Hours Episode 24

- Microsoft Docs: Microsoft Teams PowerShell Overview

- Microsoft Docs: Install Microsoft Teams PowerShell

- Microsoft 365 Developer Blog: Microsoft Graph PowerShell Preview

- Microsoft Tech Community: PowerShell Basics: Don’t Fear Hitting Enter with -WhatIf

- Zipped Script: ReplaceTeamsOwners.zip
