One of the hotly anticipated items in SharePoint 2010’s feature set is the introduction of the Microsoft Office Web Applications, or “Office Web Apps” for short. The release of the Office Web Apps opens up new possibilities for those who work with documents and files that are tied to Microsoft Word and other applications in the Microsoft Office Family.

What Are the Office Web Apps?

In prior versions of SharePoint, viewing and editing Office documents that existed in SharePoint document libraries normally required a client computer possessing the Microsoft Office suite of applications. If you wanted to view or edit a Word document that existed in SharePoint, for example, you needed Microsoft Word (or an equivalent application) installed on your computer.

That situation changes with the arrival of the Office Web Apps. When a SharePoint 2010 farm is properly set up and configured with the Office Web Apps, it becomes possible to view and edit several different Office document types directly from within a browser as shown in Figure 1 below.

Figure 1: Browser-based editing of a Microsoft Word document

The Office Web Apps provide browser-based viewing and editing support for Microsoft Excel, OneNote, PowerPoint, and Word document types, and this support extends to more than just Internet Explorer. Firefox 3.x, Safari 4.x, and Google Chrome browser types are also supported for viewing and editing – making the Office Web Apps an enabler of cross-platform collaboration that centers on Office documents.

A Word about the Plumbing

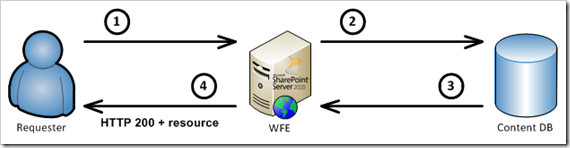

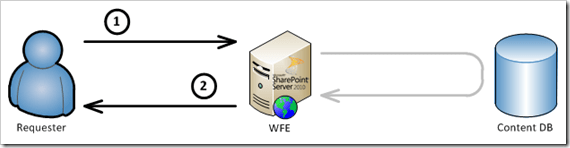

As you might imagine, browser-based rendering and editing of Office documents involves a number of complex processes that engage a variety of front-end, middle-tier, and back-end components. The front-end and middle-tier tasks that are tied to document viewing and editing are handled primarily by a new set of service applications that appear when the Office Web Apps are installed. These service applications (and their associated pages, handlers, and worker processes) take care of the business of document conversion, load-balancing, and rendering for browser consumption.

Document conversion and rendering typically generate a combination of images, HTML, JavaScript, and XAML (or eXtensible Application Markup Language) that are sent to consuming browsers. The creation of these document resources is an expensive process, both in terms of CPU cycles and storage. To improve performance levels, it makes sense to generate these document resources only as needed and reuse them whenever possible. That’s where the Office Web Apps cache comes in.

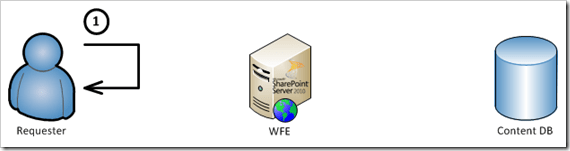

The Office Web Apps cache is the back-end store that is responsible for housing images, HTML, JavaScript, and XAML resources once they have been created for a document. Each time a document is converted into a set of these resources, the resources are stored in the Office Web Apps cache. When a request for a document comes into SharePoint, the cache is checked to see if the document has been previously requested and rendered. If it has, and the cached document resources are up-to-date for the document, then the document request is served from the cache instead of engaging the Office Web Apps to convert and re-render it. Serving document resources from the Office Web Apps cache can yield significant performance improvements over scenarios where no cache is employed.
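To make the check-then-serve flow a bit more concrete, here is a small, purely illustrative PowerShell sketch. It is not how the Office Web Apps implement the cache internally; the hashtable store, the version stamp, and the function names Convert-Document and Get-RenderedDocument are all assumptions I made up for the example.

```powershell
# Illustrative only: a hashtable stands in for the cache site collection.
# Keys are document URLs; values hold the version that was rendered and the
# rendered resources. None of these names come from the product itself.
$script:renderCache = @{}
$script:conversionCount = 0

function Convert-Document($url, $version)
{
    # Stand-in for the expensive conversion and rendering step.
    $script:conversionCount++
    return "rendered:$url (v$version)"
}

function Get-RenderedDocument($url, $version)
{
    $entry = $script:renderCache[$url]
    if ($null -ne $entry -and $entry.Version -eq $version)
    {
        # Cache hit: resources exist and match the current document version.
        return $entry.Resources
    }

    # Cache miss or stale entry: convert, then store for the next request.
    $resources = Convert-Document $url $version
    $script:renderCache[$url] = @{ Version = $version; Resources = $resources }
    return $resources
}

# The first request converts, the second is served from the cache, and a new
# document version forces another conversion.
Get-RenderedDocument "http://hostname/docs/plan.docx" 1 | Out-Null
Get-RenderedDocument "http://hostname/docs/plan.docx" 1 | Out-Null
Get-RenderedDocument "http://hostname/docs/plan.docx" 2 | Out-Null
Write-Host "Conversions performed: $script:conversionCount"
```

The point of the sketch is simply that repeat requests for an unchanged document skip the conversion step entirely, which is where the performance gain comes from.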

Quick side note before going too far: the Office Web Apps cache is only employed for Word and PowerPoint document types. It is not used for OneNote or Excel documents.

Inside the Office Web Apps Cache

The Office Web Apps cache takes the form of a single site collection for each Web application within a SharePoint farm. When the Office Web Apps are installed and configured in a SharePoint environment, a couple of new timer jobs are installed and run regularly within the farm. One of those timer jobs, the Office Web Apps Cache Creation timer job, ensures that each Web application where the Office Web Apps are running has a site collection like the one shown below in Figure 2.

Figure 2: The Office_Viewing_Service_Cache site collection

The Office_Viewing_Service_Cache site collection is a standard Team Site, and it is the location where resources are stored following the conversion and rendering of either a Word or PowerPoint document by the Office Web Apps.

The Team Site can be accessed just like any other SharePoint Team Site, and a glimpse inside the All Documents library (showing a number of document resources) appears below in Figure 3.

Figure 3: All Documents library in an Office Web Apps cache site collection

Managing the Cache

For such a complex system, the Office Web Apps components do a pretty good job of maintaining themselves without external intervention. This extends to the site collections that are used by Office Web Apps for caching purposes, as well. For example, the Office Web Apps Expiration timer job that is installed with the Office Web Apps removes old document resources from cache site collections once they’ve hit a certain age. The timer job also ensures that each of the site collections responsible for caching has adequate space to serve its purpose.
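As a rough mental model of age-based expiration, consider the sketch below. The internals of the real timer job aren't covered in this article, so treat the function name Get-ExpiredCacheItems, the item shape, and the sample dates as hypothetical.

```powershell
# Illustrative only: models age-based expiration over a list of cached items.
function Get-ExpiredCacheItems($items, [int]$expirationDays, [DateTime]$now)
{
    $cutoff = $now.AddDays(-$expirationDays)
    # Anything created before the cutoff has outlived the expiration period.
    return @($items | Where-Object { $_.Created -lt $cutoff })
}

# Two made-up cached resources: one 45 days old, one 10 days old.
$now = [DateTime]"2011-06-08"
$items = @(
    New-Object PSObject -Property @{ Name = "slide1.png"; Created = $now.AddDays(-45) }
    New-Object PSObject -Property @{ Name = "page1.xaml"; Created = $now.AddDays(-10) }
)

# Wrap the call in @() so a single result is still treated as an array.
$expired = @(Get-ExpiredCacheItems $items 30 $now)
Write-Host "Expired items: $($expired.Count)"
```

With a 30-day expiration period, only the 45-day-old resource falls before the cutoff and would be a candidate for removal.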

This doesn’t mean that there aren’t opportunities for tuning and maintenance, though. In fact, there are a couple of things that every administrator should do and review when it comes to the Office Web Apps cache.

Tip #1: Relocate the Cache to a New Database

By default, the Office Web Apps Cache Creation timer job creates an Office_Viewing_Service_Cache site collection in a content database that is collocated with one or more of the “real” site collections within each of your content Web applications. Since the cache site collection is allowed to grow to a beefy 100GB by default, it makes sense to relocate the cache site collection to its own (new) content database. By relocating the cache site collection to its own content database, it becomes easy to exclude it from other maintenance such as backups.

Relocating the cache site collection is straightforward, and it can be accomplished with the following RelocateOwaCache.ps1 PowerShell script. Simply save the script, execute it, and supply the URL of a Web application within your farm where the Office Web Apps are running. The script will take care of creating a new content database within the Web application, and it will then move the Web application’s Office Web Apps cache site collection to the newly created content database.

[code language="powershell"]
<#
.SYNOPSIS
    RelocateOwaCache.ps1
.DESCRIPTION
    Relocates the Office Web Apps cache for a specified Web application to a new content database that is created by the script
.NOTES
    Author: Sean McDonough
    Last Revision: 07-June-2011
.PARAMETER targetUrl
    A Web application where Office Web Apps are in use
.EXAMPLE
    RelocateOwaCache.ps1 http://www.TargetWebApplication.com
#>
param
(
    [string]$targetUrl = "$(Read-Host 'Target Web application URL [e.g. http://hostname]')"
)

function RelocateCache($targetUrl)
{
    # Ensure that the SharePoint cmdlets are loaded before continuing
    $spCmdlets = Get-PSSnapin Microsoft.SharePoint.PowerShell -ErrorAction silentlycontinue
    if ($spCmdlets -eq $Null)
    { Add-PSSnapin Microsoft.SharePoint.PowerShell }

    # Get the name of the current database where the cache is located; it
    # will serve as the basis for a new content database name.
    $cacheSite = Get-SPOfficeWebAppsCache -WebApplication $targetUrl -ErrorAction stop
    $newDbName = $cacheSite.ContentDatabase.Name + "_OWACache"

    # Create a new content database and relocate the cache to it. Make sure the
    # user knows what's happening each step of the way.
    Write-Host "- creating a new content database …"
    $cacheDb = New-SPContentDatabase -Name $newDbName -WebApplication $targetUrl -ErrorAction stop
    Write-Host "- moving the Office Web Apps cache …"
    Move-SPSite $cacheSite -DestinationDatabase $cacheDb -Confirm:$false -ErrorAction stop
    Write-Host "- performing required IISRESET …"
    iisreset | Out-Null

    # Let the user know where the cache is now located
    Write-Host "Cache successfully relocated to the '$newDbName' database."

    # Abort script processing in the event an exception occurs. Inside a trap,
    # $_ is an ErrorRecord, so the message text lives on its Exception property.
    trap
    {
        Write-Warning "`n*** Script execution aborting. See below for problem encountered during execution. ***"
        $_.Exception.Message
        break
    }
}

# Launch script
RelocateCache $targetUrl
[/code]

Tip #2: Review Size and Expiration Settings

When an Office_Viewing_Service_Cache site collection is provisioned within a Web application by the Office Web Apps Cache Creation timer job, it is initially configured to hold cached document resources for 30 days. As mentioned in Tip #1, a cache site collection can also grow to a maximum of 100GB by default.

Whether or not these default settings are appropriate for a Web application depends primarily upon the nature of the site collections housed within the Web application. When site collections contain primarily static documents or content that changes infrequently, it makes sense to allow the cache to grow larger and expire content less often than normal. This maximizes the benefit obtained from caching since document content turns over less frequently.

On the other hand, site collections that experience frequent document turnover and heavy collaboration traffic tend to benefit very little from large cache sizes and long expiration periods. In site collections of this nature, cached content tends to become stale quickly. Little benefit is derived from holding onto document resources that may only be good for days or even hours, so it makes sense to reduce the maximum cache size and shorten the expiration period.
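To illustrate the two ends of that spectrum, here is a minimal sketch that builds parameter sets for the Set-SPOfficeWebAppsCache cmdlet from friendlier units. The helper name New-OwaCacheSettings, the URLs, and the specific sizes and periods are hypothetical and are not recommendations; pick values that fit your own content.

```powershell
# Builds a parameter hashtable for Set-SPOfficeWebAppsCache.
# The function name and the sample numbers are illustrative only.
function New-OwaCacheSettings([string]$webAppUrl, [Int64]$maxSizeGB, [int]$expirationDays)
{
    return @{
        WebApplication         = $webAppUrl
        MaxSizeInBytes         = $maxSizeGB * 1GB
        ExpirationPeriodInDays = $expirationDays
    }
}

# A mostly static document repository: larger cache, slower expiration.
$staticSettings = New-OwaCacheSettings "http://archive" 150 60

# A busy collaboration site: smaller cache, faster expiration.
$busySettings = New-OwaCacheSettings "http://teams" 25 7

# On a SharePoint 2010 server with the SharePoint snap-in loaded, either
# hashtable could be applied by splatting it onto the cmdlet:
#   Set-SPOfficeWebAppsCache @busySettings
```

Keeping the values in a hashtable makes it easy to review what will change before anything is actually applied to the farm.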

Tip #3: Give Yourself Some Warning

Since each Office Web Apps cache is a Team Site like any other site collection, you can leverage standard SharePoint site collection features and capabilities to help you out. One such mechanism that can be of assistance is the ability to have an e-mail warning sent to site collection owners once a site collection’s size hits a predefined threshold. In the case of the Office Web Apps cache, such a warning could be a cue to increase the maximum size of the cache site collection or perhaps lower the expiration period for document resources housed within the site collection.

Like the maximum cache size setting described in Tip #2, the ability to send e-mail warnings once the cache reaches a threshold is actually tied to SharePoint’s site collection quota capabilities. The maximum size of the cache site collection is handled as a storage quota, and the warning threshold maps directly to the quota’s warning threshold as shown below in Figure 4. In the case of Figure 4, a maximum cache size of 50GB is in effect for the cache site collection, and the e-mail warning threshold is set for 25GB.

Figure 4: Quota settings for an Office Web Apps cache site collection
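If you'd rather check where a cache stands against its quota from the console, the relationship between usage, warning threshold, and maximum size can be sketched as follows. The function name Get-CacheQuotaStatus and its return strings are made up for illustration; the sample figures are the 50GB and 25GB values from Figure 4.

```powershell
# Classifies cache usage against the quota's warning and maximum levels.
# Function name and return strings are illustrative only.
function Get-CacheQuotaStatus([Int64]$usedBytes, [Int64]$warningBytes, [Int64]$maxBytes)
{
    if ($maxBytes -gt 0 -and $usedBytes -ge $maxBytes) { return "Full" }

    # A warning level of 0 means no warning threshold is configured.
    if ($warningBytes -gt 0 -and $usedBytes -ge $warningBytes) { return "Warning" }

    return "OK"
}

# The Figure 4 values: 50GB maximum with a 25GB warning threshold.
Get-CacheQuotaStatus 10GB 25GB 50GB
Get-CacheQuotaStatus 30GB 25GB 50GB
```

On a live farm, the three inputs could plausibly be taken from the site collection returned by Get-SPOfficeWebAppsCache: its Usage.Storage value for the bytes in use, and its Quota.StorageWarningLevel and Quota.StorageMaximumLevel values for the thresholds.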

Knobs and Dials

Tips #2 and #3 discussed some of the more straightforward Office Web Apps cache settings that are available to you, but you might be wondering how you actually go about changing them.

The AdjustOwaCache.ps1 PowerShell script that appears below provides you with an easy way to review and change the settings discussed. Simply save the script, execute it, and supply the URL of the Web application containing the Office Web Apps cache you’d like to adjust. The script will show you the cache’s current settings and give you the opportunity to modify them.

[code language="powershell"]
<#
.SYNOPSIS
    AdjustOwaCache.ps1
.DESCRIPTION
    Dumps several common OWA cache settings to the console for a selected Web application and provides a mechanism for altering those values
.NOTES
    Author: Sean McDonough
    Last Revision: 08-June-2011
.PARAMETER targetUrl
    A Web application where Office Web Apps are in use
.EXAMPLE
    AdjustOwaCache.ps1 http://www.TargetWebApplication.com
#>
param
(
    [string]$targetUrl = "$(Read-Host 'Target Web application URL [e.g. http://hostname]')"
)

function AdjustCache($targetUrl)
{
    # Ensure that the SharePoint cmdlets are loaded before continuing
    $spCmdlets = Get-PSSnapin Microsoft.SharePoint.PowerShell -ErrorAction silentlycontinue
    if ($spCmdlets -eq $Null)
    { Add-PSSnapin Microsoft.SharePoint.PowerShell }

    # Create an easy converter for GB to bytes
    $GBtoBytes = 1024 * 1024 * 1024

    # Get a reference to the cache site collection and extract the values we'll be
    # working with and (potentially) altering.
    $cacheSite = Get-SPOfficeWebAppsCache -WebApplication $targetUrl -ErrorAction stop
    $wacSize = $cacheSite.Quota.StorageMaximumLevel / $GBtoBytes
    $wacWarn = $cacheSite.Quota.StorageWarningLevel / $GBtoBytes

    # Fall back to the 30-day default when no expiration period has been stored
    $wacExpire = 30
    if ($cacheSite.RootWeb.Properties.ContainsKey("waccacheexpirationperiod"))
    { $wacExpire = $cacheSite.RootWeb.Properties["waccacheexpirationperiod"] }

    Write-Host "Current OWA cache values for '$targetUrl'"
    Write-Host "- Maximum Cache Size (GB): $wacSize"
    Write-Host "- Warning Threshold (GB): $wacWarn"
    Write-Host "- Expiration Period (Days): $wacExpire"

    # Give the user the option to make changes.
    $yesOrNo = Read-Host "Would you like to change one or more values? [y/n]"
    if ($yesOrNo -eq "y")
    {
        [Int64]$newWacSize = Read-Host "- Maximum Cache Size (GB)"
        Write-Host "- Warning Threshold (GB)"
        [Int64]$newWacWarn = Read-Host " (supply 0 for no warning)"
        [int]$newWacExpire = Read-Host "- Expiration Period (Days)"

        # Convert GB values to bytes and set the cache
        $newWacSize = ($newWacSize * $GBtoBytes)
        $newWacWarn = ($newWacWarn * $GBtoBytes)
        Set-SPOfficeWebAppsCache -WebApplication $targetUrl -ExpirationPeriodInDays $newWacExpire -MaxSizeInBytes $newWacSize -WarningSizeInBytes $newWacWarn -ErrorAction stop
    }

    # Abort script processing in the event an exception occurs. Inside a trap,
    # $_ is an ErrorRecord, so the message text lives on its Exception property.
    trap
    {
        Write-Warning "`n*** Script execution aborting. See below for problem encountered during execution. ***"
        $_.Exception.Message
        break
    }
}

# Launch script
AdjustCache $targetUrl
[/code]

Conclusion

The Office Web Apps are a powerful addition to SharePoint 2010 and pave the way for greater collaboration on Office documents without the need for the Microsoft Office suite of client applications. The Office Web Apps cache is an important part of the larger Office Web Apps equation, and the cache is generally pretty good about taking care of itself. As shown in this article, though, it is still a good idea to relocate the cache from its default location. At the same time, a little bit of tuning and e-mail alerting can go a long way towards ensuring that the cache operates optimally for you in your environment.
