Several months ago, I decided that a rebuild of my primary MOSS environment here at home was in order. My farm consisted of a couple of Windows Server 2003 R2 VMs (one WFE, one app server) that were backed by a non-virtualized SQL Server. I wanted to free up some cycles on my Hyper-V boxes, and I had an “open physical box” … so, I elected to rebuild my farm on a single, non-virtualized box running (the then newly released) Windows Server 2008 R2.

The rebuild went relatively smoothly, and bringing my content databases over from the old farm posed no particular problems. Everything was good.

The Situation

Fast forward to just a few weeks ago.

One of the site collections in my farm is used to store and share pictures that we take of our kids. The site collection is, in effect, a huge multimedia repository …

… and allow me a moment to address the concerns of the savvy architects and administrators out there. I do understand SharePoint BLOB (binary large object) storage and the implications (and potential effects) that large multimedia libraries can have on scalability. I wouldn’t recommend what I’m doing to most clients – at least not until remote BLOB storage (RBS) gets here with SharePoint 2010. Remember, though, that my wife and I are just two people – not a company of hundreds or thousands. The benefits of centralized, tagged, searchable, nicely presented content outweigh scalability and performance concerns for us.

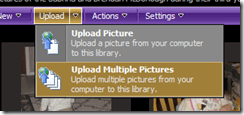

Back to the pictures site. I was getting set to upload a batch of pictures, so I did what I always do: I went into the Upload menu of the target pictures library in the site collection and selected Upload Multiple Pictures as shown on the right. For those who happen to have Microsoft Office 2007 installed (as I do), this action normally results in the Microsoft Office Picture Manager getting launched as shown below.

From within the Microsoft Office Picture Manager, uploading pictures is simply a matter of navigating to the folder containing the pictures, selecting the ones that are to be pushed into SharePoint, and pressing the Upload and Close button. From there, the application itself takes care of rounding up the pictures that have been selected and getting them into the picture library within SharePoint. SharePoint pops up a page that provides a handy “Go back to …” link that can then be used to navigate back to the library for viewing and working with the newly uploaded pictures.

Upon selecting the Upload Multiple Pictures menu item, SharePoint navigated to the infopage.aspx page shown above. I waited, and waited … but the Microsoft Office Picture Manager never launched. I hit my browser’s back button, and tried the operation again. Same result: no Picture Manager.

Trouble In River City

Picture Manager’s failure to launch was obviously a concern, and I wanted to know why I was encountering problems … but more than anything, I simply wanted to get my pictures uploaded and tagged. My wife had been snapping pictures of our kids left and right, and I had 131 JPEG files waiting for me to do something.

I figured that there was more than one way to skin a cat, so I initiated my backup plan: Explorer View. If you aren’t familiar with SharePoint’s Explorer View, then you need not look any further than the name to understand what it is and how it operates. By opening the Actions menu of a library (such as a Document Library or Picture Library) and selecting the Open with Windows Explorer menu item as shown on the right, a Windows Explorer window is opened to the library. The contents of the library can then be examined and manipulated using a file system paradigm – even though SharePoint libraries are not based in (or housed in) any physical file system.

The mechanisms through which the Explorer View is prepared, delivered, and rendered are really quite impressive from a technical perspective. I’m not going to go into the details, but if you want to learn more about them, I highly recommend a whitepaper that was authored by Steve Sheppard. Steve is an escalation engineer with Microsoft whom I’ve worked with in the past, and his knowledge and attention to detail are second to none – and those qualities really come through in the whitepaper.

Unfortunately for me, though, my attempts to open the picture library in Explorer View also led nowhere. Simply put, nothing happened. I tried the Open with Windows Explorer option several times, and I was greeted with no action, error, or visible sign that anything was going on.

SharePoint and WebDAV

I was 0 for 2 on my attempts to get at the picture library for uploading. I wasn’t sure what was going on, but I was pretty sure that WebDAV (Web Distributed Authoring and Versioning) was mixed up in the behavior I was seeing. WebDAV is implemented by SharePoint and typically employed to provide the Explorer View operations it supports. I was under the impression that the Microsoft Office Picture Manager leveraged WebDAV to provide some or all of its upload capabilities, too.

After a few moments of consideration, the notion that WebDAV might be involved wasn’t a tremendous mental leap. In rebuilding my farm on Windows Server 2008 R2, I had moved from Internet Information Services (IIS) version 6 (in Windows Server 2003 R2) to IIS7. WebDAV is different in IIS7 versus previous versions … I just hadn’t heard about SharePoint WebDAV-based functions operating any differently.

Playing a Client-Side Tune

My gut instincts regarding WebDAV hardly qualified as “objective troubleshooting information,” so I fired up Fiddler2 to get a look at what was happening between my web browser and the rebuilt SharePoint farm. When I attempted to execute an Open with Windows Explorer against the picture library, I was greeted with a bunch of HTTP 405 errors.

405 ?!?!

To be completely honest, I’d never actually seen an HTTP 405 status code before. It was obviously an error (since it was in the 400-series), but beyond that, I wasn’t sure. A couple of minutes of digging through the W3C’s status code definitions, though, revealed that a 405 status code is returned whenever the requested method or verb isn’t allowed for the resource being requested.

I dug a little deeper and compared the request headers my browser had sent with the response headers I’d received from SharePoint. Doing that spelled out the problem pretty clearly.

Here’s the request line from one of the HTTP requests that was sent:

[sourcecode language=”text”]
PROPFIND /pictures/twins3/2009-12-07_no.3 HTTP/1.1
[/sourcecode]

… and here’s the relevant portion of the response that the SharePoint server sent back:

[sourcecode language=”text”]
HTTP/1.1 405 Method Not Allowed
Allow: GET, HEAD, OPTIONS, TRACE
[/sourcecode]

PROPFIND was the method that my browser was passing to SharePoint, and the request was failing because the server didn’t include the PROPFIND verb in its list of supported methods as stated in the Allow: portion of the response. PROPFIND was further evidence that WebDAV was in the mix, too, given its limited usage scenarios (and since the bulk of browser web requests employ either the GET or POST verb).
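The comparison described above can be sketched in a few lines of Python. This is only an illustration of the check, not a tool used during the original troubleshooting; the function names `parse_response_head` and `method_is_allowed` are made up for the example, and the input is the 405 response shown earlier.

```python
def parse_response_head(raw):
    """Parse a raw HTTP response head into (status, reason, headers).

    Blank lines are skipped so that loosely formatted captures still parse.
    """
    lines = [line.strip() for line in raw.splitlines() if line.strip()]
    _version, status, reason = lines[0].split(" ", 2)
    headers = {}
    for line in lines[1:]:
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()
    return int(status), reason, headers


def method_is_allowed(method, headers):
    """Return True if `method` appears in the response's Allow header."""
    allowed = {m.strip().upper() for m in headers.get("allow", "").split(",")}
    return method.upper() in allowed


# The failing response captured in Fiddler2 (status line plus Allow header).
captured = """HTTP/1.1 405 Method Not Allowed
Allow: GET, HEAD, OPTIONS, TRACE
"""

status, reason, headers = parse_response_head(captured)
print(status, reason)                          # 405 Method Not Allowed
print(method_is_allowed("PROPFIND", headers))  # False
print(method_is_allowed("GET", headers))       # True
```

The point of the sketch is simply that the server's own Allow list excludes PROPFIND, which is exactly what the 405 status is reporting.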

So what was going on? The operations I was attempting had worked without issue under IIS6 and Windows Server 2003 R2, and I was pretty confident that I hadn’t seen any issues with other Windows Server 2008 (R2 and non-R2) farms running IIS7. I’d either botched something on my farm rebuild or run into an esoteric problem of some sort; experience (and common sense) pointed to the former.

Doing Some Legwork

I turned to the Internet to see if anyone else had encountered HTTP 405 errors with SharePoint and WebDAV. Though I quickly found a number of posts, questions, and other information that seemed related to my situation, none of it really described my particular scenario or what I was seeing.

After some additional searching, I eventually came across a discussion on the MSDN forums that revolved around whether or not WebDAV should be enabled within IIS for servers that serve up SharePoint content. The back and forth was a bit disjointed, but my relevant take-away was that enabling WebDAV within IIS7 seemed to cause problems for SharePoint.

I decided to have a look at the server housing my rebuilt farm to see if I had enabled the WebDAV Publishing role service. I didn’t think I had, but I needed to check. I opened up the Server Manager applet and had a look at the Role Services that were enabled for the Web Server (IIS). The results are shown in the image on the right; apparently, I had enabled WebDAV Publishing. My guess is that I did it because I thought it would be a good idea, but it was starting to look like a pretty bad idea all around.

The Test

I was tempted to simply remove the WebDAV Publishing role service and cross my fingers, but instead of messing with my live “production” farm, I decided to play it safe and study the effects of enabling and disabling WebDAV Publishing in a controlled environment. I fired up a VM that more-or-less matched my production box (Windows Server 2008 R2, 64-bit, same Windows and SharePoint patch levels) to play around.

When I fired up the VM, a quick check of the enabled role services for IIS showed that WebDAV Publishing was not enabled – further proof that I got a bit overzealous in enabling role services on my rebuilt farm. I quickly went into the VM’s SharePoint Central Administration site and created a new web application (http://spsdev:18480). Within the web application, I created a team site called Sample Team Site. Within that team site, I then created a picture library called Sample Picture Library for testing.

When It Works (without the WebDAV Publishing Role Service)

I fired up Fiddler2 in the VM, opened Internet Explorer 8, navigated to the Sample Picture Library, and attempted to execute an Open with Windows Explorer operation. Windows Explorer opened right up, so I knew that things were working as they should within the VM. The pertinent capture for the exchange between Internet Explorer and SharePoint (from Fiddler2) appears below.

Reviewing the dialog between client and server, there appeared to be two distinct “stages” in this sequence. The first stage was an HTTP request that was made to the root of the site collection using the OPTIONS method, and the entire HTTP request looked like this:

[sourcecode language=”text”]
OPTIONS / HTTP/1.1
Cookie: MSOWebPartPage_AnonymousAccessCookie=18480
User-Agent: Microsoft-WebDAV-MiniRedir/6.1.7600
translate: f
Connection: Keep-Alive
Host: spdev:18480
[/sourcecode]

In response to the request, the SharePoint server passed back an HTTP 200 status that looked similar to the block that appears below. Note the permitted methods/verbs (as Allow:) that the server said it would accept, and that the PROPFIND verb appeared within the list:

[sourcecode language=”text”]
HTTP/1.1 200 OK
Cache-Control: private,max-age=0
Allow: GET, POST, OPTIONS, HEAD, MKCOL, PUT, PROPFIND, PROPPATCH, DELETE, MOVE, COPY, GETLIB, LOCK, UNLOCK
Content-Length: 0
Accept-Ranges: none
Server: Microsoft-IIS/7.5
MS-Author-Via: MS-FP/4.0,DAV
X-MSDAVEXT: 1
DocumentManagementServer: Properties Schema;Source Control;Version History;
DAV: 1,2
Expires: Sun, 06 Dec 2009 21:13:27 GMT
Public-Extension: http://schemas.microsoft.com/repl-2
Set-Cookie: WSS_KeepSessionAuthenticated=18480; path=/
Persistent-Auth: true
X-Powered-By: ASP.NET
MicrosoftSharePointTeamServices: 12.0.0.6510
Date: Mon, 21 Dec 2009 21:13:27 GMT
[/sourcecode]

After this initial request and associated response, all subsequent requests (“stage 2”) were made using the PROPFIND verb and had a structure that was similar to the following:

[sourcecode language=”text”]
PROPFIND /Sample%20Picture%20Library HTTP/1.1
User-Agent: Microsoft-WebDAV-MiniRedir/6.1.7600
Depth: 0
translate: f
Connection: Keep-Alive
Content-Length: 0
Host: spdev:18480
Cookie: MSOWebPartPage_AnonymousAccessCookie=18480; WSS_KeepSessionAuthenticated=18480
[/sourcecode]

Each of the requests returned a 207 HTTP status (WebDAV multi-status response) and some WebDAV data within an XML document (slightly modified for readability).

[sourcecode language=”text”]
HTTP/1.1 207 MULTI-STATUS
Cache-Control: no-cache
Content-Length: 1132
Content-Type: text/xml
Server: Microsoft-IIS/7.5
Public-Extension: http://schemas.microsoft.com/repl-2
Set-Cookie: WSS_KeepSessionAuthenticated=18480; path=/
Persistent-Auth: true
X-Powered-By: ASP.NET
MicrosoftSharePointTeamServices: 12.0.0.6510
Date: Mon, 21 Dec 2009 21:13:27 GMT

<?xml version="1.0" encoding="utf-8" ?><D:multistatus xmlns:D="DAV:" xmlns:Office="urn:schemas-microsoft-com:office:office" xmlns:Repl="http://schemas.microsoft.com/repl/" xmlns:Z="urn:schemas-microsoft-com:">
<D:response><D:href>http://spdev:18480/Sample Picture Library</D:href><D:propstat>
…
</D:propstat>
</D:response>
</D:multistatus>
[/sourcecode]

It was these PROPFIND requests (or rather, the 207 responses to the PROPFIND requests) that gave the client-side WebClient (directed by Internet Explorer) the information it needed to determine what was in the picture library, operations that were supported by the library, etc.
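To make the role of the 207 response a little more concrete, here is a minimal Python sketch of how a client might pull the basics out of a multi-status body. It is an illustration only and says nothing about how the WebClient service actually parses these responses. The `summarize_multistatus` name is invented for the example, and because the real `<D:propstat>` contents were elided in the capture above, the `<D:prop>` and `<D:status>` values in the sample are placeholders rather than captured data.

```python
import xml.etree.ElementTree as ET

DAV = {"D": "DAV:"}  # prefix-to-namespace map used in the lookups below


def summarize_multistatus(xml_text):
    """Return a list of (href, status) pairs, one per <D:response> element."""
    root = ET.fromstring(xml_text)
    results = []
    for response in root.findall("D:response", DAV):
        href = response.findtext("D:href", default="", namespaces=DAV)
        status = response.findtext("D:propstat/D:status", default="", namespaces=DAV)
        results.append((href, status))
    return results


# Illustrative body: the href comes from the capture above, but the
# <D:prop> and <D:status> contents are placeholders, not captured data.
sample = """<D:multistatus xmlns:D="DAV:">
  <D:response>
    <D:href>http://spdev:18480/Sample Picture Library</D:href>
    <D:propstat>
      <D:prop><D:displayname>Sample Picture Library</D:displayname></D:prop>
      <D:status>HTTP/1.1 200 OK</D:status>
    </D:propstat>
  </D:response>
</D:multistatus>"""

for href, status in summarize_multistatus(sample):
    print(href, "->", status)
```

Each `<D:response>` element describes one resource, which is how a single PROPFIND reply can describe a library and everything inside it.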

When It Doesn’t Work (i.e., WebDAV Publishing Enabled)

When the WebDAV Publishing role service was enabled within IIS7, the very same request (to open the picture library in Explorer View) yielded a very different series of exchanges (again, captured within Fiddler2):

The initial OPTIONS request returned an HTTP 200 status that was identical to the one previously shown, and it even included the PROPFIND verb amongst its list of accepted methods:

[sourcecode language=”text”]
HTTP/1.1 200 OK
Cache-Control: private,max-age=0
Allow: GET, POST, OPTIONS, HEAD, MKCOL, PUT, PROPFIND, PROPPATCH, DELETE, MOVE, COPY, GETLIB, LOCK, UNLOCK
Content-Length: 0
Accept-Ranges: none
Server: Microsoft-IIS/7.5
MS-Author-Via: MS-FP/4.0,DAV
X-MSDAVEXT: 1
DocumentManagementServer: Properties Schema;Source Control;Version History;
DAV: 1,2
Expires: Sun, 06 Dec 2009 22:04:31 GMT
Public-Extension: http://schemas.microsoft.com/repl-2
Set-Cookie: WSS_KeepSessionAuthenticated=18480; path=/
Persistent-Auth: true
X-Powered-By: ASP.NET
MicrosoftSharePointTeamServices: 12.0.0.6510
Date: Mon, 21 Dec 2009 22:04:31 GMT
[/sourcecode]

Even though the PROPFIND verb was supposedly permitted, subsequent requests resulted in an HTTP 405 status and failure:

[sourcecode language=”text”]
HTTP/1.1 405 Method Not Allowed
Allow: GET, HEAD, OPTIONS, TRACE
Server: Microsoft-IIS/7.5
Persistent-Auth: true
X-Powered-By: ASP.NET
MicrosoftSharePointTeamServices: 12.0.0.6510
Date: Mon, 21 Dec 2009 22:04:31 GMT
Content-Length: 0
[/sourcecode]

Unfortunately, these behind-the-scenes failures didn’t seem to generate any noticeable error or message in client browsers. While testing (locally) in the VM environment, I was at least prompted to authenticate and eventually shown a form of “unsupported” error message. While connecting (remotely) to my production environment, though, the failure was silent. Only Fiddler2 told me what was really occurring.
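Since the failure can be silent, a small probe that repeats the two-stage exchange and flags the mismatch may be handy. What follows is a rough Python sketch under some loud assumptions: the `probe` and `advertised_but_rejected` names are invented, the server is reached over plain HTTP, and no authentication is handled, so a farm that demands NTLM or Kerberos would just answer 401. The pure check at the bottom uses values from the captures above and needs no network.

```python
import http.client


def advertised_but_rejected(method, options_allow, actual_status):
    """True when OPTIONS listed `method` but the real request came back 405."""
    advertised = {m.strip().upper() for m in options_allow.split(",")}
    return method.upper() in advertised and actual_status == 405


def probe(host, port, path, method="PROPFIND"):
    """Send OPTIONS to the site root, then `method` to `path`.

    Assumes plain HTTP and no authentication handling; a server that
    requires NTLM or Kerberos will simply answer 401 here.
    Returns (Allow header from OPTIONS, status code of the second request).
    """
    conn = http.client.HTTPConnection(host, port, timeout=10)
    try:
        conn.request("OPTIONS", "/", headers={"translate": "f"})
        options = conn.getresponse()
        options.read()  # drain the body before reusing the connection
        allow = options.getheader("Allow", "")

        conn.request(
            method,
            path,
            headers={"Depth": "0", "translate": "f", "Content-Length": "0"},
        )
        reply = conn.getresponse()
        reply.read()
        return allow, reply.status
    finally:
        conn.close()


# Values taken from the two captures above (no network needed):
options_allow = (
    "GET, POST, OPTIONS, HEAD, MKCOL, PUT, PROPFIND, PROPPATCH, "
    "DELETE, MOVE, COPY, GETLIB, LOCK, UNLOCK"
)
print(advertised_but_rejected("PROPFIND", options_allow, 405))  # True
print(advertised_but_rejected("PROPFIND", options_allow, 207))  # False
```

Against a live server, the call would look something like `probe("spdev", 18480, "/Sample%20Picture%20Library")`, with the path already URL-encoded, and its two return values can be fed straight into `advertised_but_rejected`.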

The Solution

The solution to this issue, it seems, is to ensure that the WebDAV Publishing role service is not installed on WFEs serving up SharePoint content in Windows Server 2008 / IIS7 environments. The mechanism by which SharePoint 2007 handles WebDAV requests is still something of a mystery to me, but it doesn’t appear to involve the IIS7-based WebDAV Publishing role service at all.

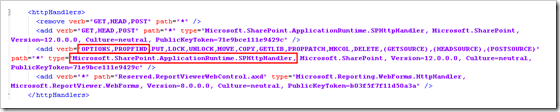

Steve Sheppard’s troubleshooting whitepaper (introduced earlier) mentions that enabling or disabling the WebDAV functionality supplied by IIS6 (under Windows Server 2003) has no appreciable effect on SharePoint operation. Steve even mentions that SharePoint’s internal WebDAV implementation is provided by an ISAPI filter that is housed in Stsfilt.dll. Though this was true in WSSv2 and SharePoint Portal Server 2003 (the platforms addressed by Steve’s whitepaper), it’s no longer the case with SharePoint 2007 (WSSv3 and MOSS 2007). The OPTIONS and PROPFIND verbs are mapped to the Microsoft.SharePoint.ApplicationRuntime.SPHttpHandler type in SharePoint web.config files (see below) – the Stsfilt.dll library doesn’t even appear anywhere within the file system of MOSS servers (or at least in mine).

Regardless of how it is implemented, the fact that the two verbs of interest (OPTIONS and PROPFIND) are mapped to a SharePoint type indicates that WebDAV functionality is still handled privately within SharePoint for its own purposes. When the WebDAV Publishing role is enabled in IIS7, IIS7 takes over (or at least takes precedence for) PROPFIND requests … and that’s where things appear to break.
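For anyone who wants to confirm the verb mapping on their own server, a short Python sketch along these lines could list the verbs that a web.config hands to a given handler type. Treat it as an assumption-laden illustration: the `verbs_mapped_to` name is invented, and the XML below is a simplified, hypothetical fragment (including a made-up `Example.OtherHandler` entry), not a verbatim copy of a real SharePoint web.config.

```python
import xml.etree.ElementTree as ET


def verbs_mapped_to(config_xml, type_name):
    """Collect the verbs whose <httpHandlers>/<add> entry names `type_name`."""
    root = ET.fromstring(config_xml)
    verbs = set()
    for handlers in root.iter("httpHandlers"):
        for entry in handlers.findall("add"):
            if type_name in entry.get("type", ""):
                verbs.update(
                    v.strip() for v in entry.get("verb", "").split(",") if v.strip()
                )
    return verbs


# Simplified, hypothetical fragment for illustration only.
sample_config = """<configuration>
  <system.web>
    <httpHandlers>
      <add verb="OPTIONS,PROPFIND" path="*"
           type="Microsoft.SharePoint.ApplicationRuntime.SPHttpHandler, Microsoft.SharePoint" />
      <add verb="GET" path="*.example"
           type="Example.OtherHandler, Example" />
    </httpHandlers>
  </system.web>
</configuration>"""

handler = "Microsoft.SharePoint.ApplicationRuntime.SPHttpHandler"
print(sorted(verbs_mapped_to(sample_config, handler)))  # ['OPTIONS', 'PROPFIND']
```

A check like this only shows what ASP.NET is configured to route to SharePoint; it cannot show whether an IIS-level module intercepts the request first, which is the behavior the Fiddler2 captures point to.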

To Sum Up

After toggling the WebDAV Publishing role service on and off a couple of times in my VM, I became convinced that my production environment would start behaving the way I wanted it to if I simply disabled IIS7’s WebDAV Publishing functionality. I uninstalled the WebDAV Publishing role service, and both Microsoft Office Picture Manager and Explorer View started behaving again.

I also made a note to myself to avoid installing role services I thought I might need before I actually needed them :-)

Additional Reading and References

- Blog Post: Todd Klindt, “Installing Remote Blob Store (RBS) on SharePoint 2010”

- Whitepaper: Understanding and Troubleshooting the SharePoint Explorer View

- Blog: Steve Sheppard

- Microsoft TechNet: About WebDAV

- IIS.NET: WebDAV Extension

- Tool: Fiddler2

- W3C: HTTP/1.1 Status Code Definitions

- MSDN: Client Request Using PROPFIND

- TechNet Forums: IIS WebDAV service required for SharePoint webdav?

- Site: HTTP Extensions for Distributed Authoring

As always, SharePoint Saturday events are free and open to the public. If you have any interest in learning more about SharePoint, getting some free training, or simply networking and meeting other professionals in the SharePoint space, please sign up!
