Whenever I’m speaking to other technology professionals about what I do for a living, there’s always a decent chance that the topic of my home network will come up. This seems to be particularly true when talking with up-and-coming technologists, as I’m commonly asked by them how I managed to get from “Point A” (having transitioned into IT from my previous life as a polymer chemist) to “Point B” (consulting as a SharePoint architect).

I thought it would be fun (and perhaps informative) to share some information, pictures, and other geek tidbits on the thing that seems to consume so much of my “free time.” This post also allows me to make good on the promise I made to a few people to finally put something online for them to see.

Wait … “Basement Datacenter?”

For those on Twitter who may have seen my occasional use of the hashtag #BasementDatacenter: I can’t claim to have originated the term, though I fully embrace it these days. The first time I heard the term was when I was having one of the aforementioned “home network” conversations with a friend of mine, Jason Ditzel. Jason is a Principal Consultant with Microsoft, and we were working together on a SharePoint project for a client a couple of years back. He was describing his love for his recently acquired Windows Home Server (WHS) and how I should have a look at the product. I described why WHS probably wouldn’t fit into my network, and that led Jason to comment that Microsoft would have to start selling “Basement Datacenter Editions” of its products. The term stuck.

So, What Does It Look Like?

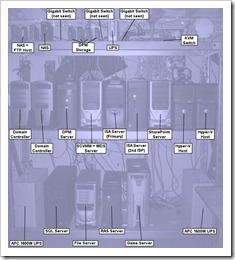

Two pictures appear on the right. The left-most shot is a picture of my server shelves from the front. Each of the computing-related items in the picture is labeled in the right-most shot. There are obviously other things in the pictures, but I tried to call out the items that might be of some interest or importance to my fellow geeks.

Generally speaking, things look relatively tidy from the front. Of course, I can’t claim to have the same degree of organization in the back. The shot on the left displays how things look behind and to the right of the shots that were taken above. All of the power, network, and KVM cabling runs are in the back … and it’s messy. I originally had things nicely organized with cables of the proper length, zip ties, and other aids. Unfortunately, servers and equipment shift around enough that the organization system wasn’t sustainable.

I’m happy that, while doing the network planning and subsequent setup, I at least had the foresight to leave myself ample room to move around behind the shelves. If I hadn’t, my life would be considerably more difficult.

On the topic of shelves: if you ever find yourself in need of extremely heavy duty, durable industrial shelves, I highly recommend this set of shelves from Gorilla Rack. They’re pretty darn heavy, but they’ll accept just about any amount of weight you want to put on them.

I had to include the shot below to give you a sense of the “ambiance.”

Anyone who’s been to my basement (which I lovingly refer to as “the bunker”) knows that I have a thing for dim but colorful lighting. I normally illuminate my basement area with Christmas lights, colored light bulbs, etc. Frankly, things in the basement are entirely too ugly (and dusty) to be viewed under normal lighting. It may be tough to see from this shot, but the servers themselves contribute some light of their own.

Why On Earth Do You Have So Many Servers?

After seeing my arrangement, the most common question I get is “why?” It’s actually an easy one to answer, but to do so requires rewinding a bit.

Many years ago, when I was a “young and hungry” developer, I was trying to build a skill set that would allow me to work in the enterprise – or at least on something bigger than a single desktop. Networking was relatively new to me, as was the notion of servers and server-side computing. The web had only been visual for a while (anyone remember text-based surfing? Quite a different experience …), HTML 3 was the rage, Microsoft was trying to get traction with ASP, ActiveX was the cool thing to talk about (or so we thought), etc.

It was around that time that I set up my first Windows NT4 server. I did so on the only hardware I had left over from my first Pentium purchase – a humble 486 desktop. I eventually got the server running, and I remember it being quite a challenge. Remember: Google and “answers at your fingertips” weren’t available a decade or more ago. Servers and networking also weren’t as forgiving and self-correcting as they are nowadays. I learned an awful lot while troubleshooting and working on that server.

Before long, though, I wanted to learn more than was possible on a single box. I wanted to learn about Windows domains, I wanted to figure out how proxies and firewalls worked (anyone remember Proxy Server 2.0?), and I wanted to start hosting online Unreal Tournament and Half-Life games for my friends. With everything new I learned, I seemed to pick up some additional hardware.

When I moved out of my old apartment and into the house that my wife and I now have, I was given the bulk of the basement for my “stuff.” My network came with me during the move, and shortly after moving in I re-architected it. The arrangement changed, and of course I ended up adding more equipment.

Fast-forward to now. At this point in time, I actually have more equipment than I want. When I was younger and single, maintaining my network was a lot of fun. Now that I have a wife, kids, and a great deal more responsibility both in and out of work, I’ve been trying to re-engineer things to improve reliability, reduce size, and keep maintenance costs (both time and money) down.

I can’t complain too loudly, though. Without all of this equipment, I wouldn’t be where I am professionally. Reading about Windows Server, networking, SharePoint, SQL Server, firewalls, etc., has been important for me, but what I’ve gained from reading pales in comparison to what I’ve learned by *doing*.

How Is It All Set Up?

I actually have documentation for most of what you see (ask my Cardinal SharePoint team), but I’m not going to share that here. I will, however, mention a handful of bullets that give you an idea of what’s running and how it’s configured.

- I’m running a Windows 2008 domain (recently upgraded from Windows 2003)

- With only a couple of exceptions, all the computers in the house are domain members

- I have redundant ISP connections (DSL and BPL) with static IP addresses so I can do things like my own DNS resolution

- My primary internal network is gigabit Ethernet; I also have two 802.11g access points

- All my equipment is UPS protected because I used to lose a lot of equipment to power irregularities and brown-outs.

- I believe in redundancy. Everything is backed up with Microsoft Data Protection Manager, and in some cases I even have redundant backups (e.g., with SharePoint data).

There’s certainly a lot more I could cover, but I don’t want to turn this post into more of a document than I’ve already made it.

Fun And Random Facts

Some of these are configuration related, some are just tidbits I feel like sharing. All are probably fleeting, as my configuration and setup are constantly in flux:

Beefiest Server: My SQL Server, a Dell T410 with quad-core Xeon and about 4TB worth of drives (in a couple of RAID configurations)

Wimpiest Server: I’ve got some straggling Pentium 3, 1.13GHz, 512MB RAM systems. I’m working hard to phase them out as they’re of little use beyond basic functions these days.

Preferred Vendor: Dell. I’ve heard plenty of stories from folks who don’t like Dell, but quite honestly, I’ve had very good luck with them over the years. About half of my boxes are Dell, and that’s probably where I’ll continue to shop.

Uptime During Power Failure: With my oversize UPS units, I’m actually good for about an hour’s worth of uptime across my whole network during a power failure. Of course, I have to start shutting down well before that (to ensure graceful power-off).

Most Common Hardware Failure: Without a doubt, I lose power supplies far more often than any other component. I think that’s due in part to the age of my machines, the fact that I haven’t always bought the best equipment, and a couple of other factors. When a machine goes down these days, the first thing I test and/or swap out is the power supply. I keep at least a couple of spares on hand at all times.

Backup Storage: I have a ridiculous amount of drive space allocated to backups. My DPM box alone has 5TB worth of dedicated backup storage, and many of my other boxes have additional internal drives that are used as local backup targets.

Server Paraphernalia: Okay, so you may have noticed all the “junk” on top of the servers. Trinkets tend to accumulate there. I’ve got a set of Matrix characters (Agent Smith and Neo), a Pip-Boy (of Fallout fame), Cheshire Cat and Alice (from American McGee’s Alice game), a Warhammer mech (one of the Battletech originals), a “cat in the bag” (don’t ask), a multimeter, and other assorted stuff.

Cost Of Operation: I couldn’t begin to tell you, though my electric bill is ridiculous (last month’s was about $400). Honestly, I don’t want to try to calculate it for fear of the result inducing some severe depression.
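For anyone curious about the "start shutting down well before that" part of the uptime item above, here is a rough sketch of how a staggered shutdown schedule could be worked out. It is not my actual tooling: the host names, per-host shutdown times, priorities, and the 15-minute safety margin are all made-up assumptions. Only the "about an hour" of UPS runtime comes from the figures above.

```python
from dataclasses import dataclass


@dataclass
class Host:
    name: str                # hypothetical host name
    shutdown_minutes: float  # assumed time needed for a graceful power-off
    priority: int            # lower number = less critical, shut down earlier


def plan_shutdowns(hosts, runtime_minutes=60.0, safety_margin_minutes=15.0):
    """Return (start_minute, host_name) pairs, counted from the power failure.

    Hosts are shut down one after another, least critical first, and the
    last one must finish before runtime_minutes - safety_margin_minutes.
    """
    deadline = runtime_minutes - safety_margin_minutes
    ordered = sorted(hosts, key=lambda h: h.priority)
    total = sum(h.shutdown_minutes for h in ordered)
    if total > deadline:
        raise ValueError("shutdowns cannot finish within the usable UPS runtime")

    # Wait as long as possible, then run the shutdowns back to back.
    start = deadline - total
    plan = []
    for host in ordered:
        plan.append((start, host.name))
        start += host.shutdown_minutes
    return plan


# Illustrative values only; none of these are measurements from my network.
example_hosts = [
    Host("sql", 10, 3),
    Host("hyperv-1", 8, 1),
    Host("dc-1", 5, 2),
]
example_plan = plan_shutdowns(example_hosts)
for minute, name in example_plan:
    print(f"T+{minute:.0f} min: begin shutting down {name}")
```

The sketch deliberately waits as long as it can before powering anything off, on the theory that most outages are short. A more cautious plan would simply start the sequence sooner or use a larger safety margin.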

Where Is It All Going?

As I mentioned, I’m actively looking for ways to get my time and financial costs down. I simply don’t have the same sort of time I used to have.

Given rising storage capacities and processor capabilities, it probably comes as no surprise to hear me say that I’ve started turning towards virtualization. I have two servers that act as dedicated Hyper-V hosts, and I fully expect the trend to continue.

Here are a few additional plans I have for the not-so-distant future:

- I just purchased a Dell T110 that I’ll be configuring as a Microsoft Forefront Threat Management Gateway 2010 (TMG) server. I currently have two Internet Security and Acceleration Server 2006 servers (one for each of my ISP connections) and a third Windows Server 2008 for SSL VPN connectivity. I can get rid of all three boxes with the feature set supplied by one TMG server. I can also dump some static routing rules and confusing firewall configuration in the process. That’s hard to beat.

- I’m going to see about virtualizing my two domain controllers (DCs) over the course of the year. Even though the machines are backed up, the hardware is near the end of its usable life. Something is eventually going to fail that I can’t replace. By virtualizing the DCs, I gain a lot of flexibility (I can move them around on physical hardware) and can get rid of two more physical boxes. Box reduction is the name of the game these days! I’ll probably build a new (virtual) DC on Windows Server 2008 R2; migrate FSMO roles, DNS, and DHCP responsibilities to it; and then phase out the physical DCs – rather than try a P2V move.

- With SharePoint Server 2010 coming, I’m going to need to get some even beefier server hardware. I’m learning and working just fine with the aid of desktop virtualization right now (my desktop is a Core i7-920 with 12GB RAM), but that won’t cut it for “production use” and testing scenarios when SharePoint Server 2010 goes RTM.

Conclusion

If the past has taught me anything, it’s that additional needs and situations will arise that I haven’t anticipated. I’m relatively confident that the infrastructure I have in place will be a solid base for any “coming attractions,” though.

If you have any questions or wonder how I did something, feel free to ask! I can’t guarantee an answer (good or otherwise), but I do enjoy discussing what I’ve worked to build.

Additional Reading and References

- LinkedIn: Jason Ditzel

- Product: Gorilla Rack Shelves

- Networking: Cincinnati Bell DSL

- Networking: Current BPL

- Microsoft: System Center Data Protection Manager

- Dell: PowerEdge Servers

- Microsoft: Hyper-V Getting Started Guide

- Movie: The Matrix

- Gaming: Fallout Site

- Gaming: American McGee’s Alice

- Gaming: Warhammer BattleMech

- Microsoft: Forefront Threat Management Gateway 2010

- Microsoft: Internet Security & Acceleration Server 2006

Thanks for giving the rest of the world a peek into your “basement datacenter” setup. Very interesting to see the lengths (and a little of the history) that some of us computer geeks go to in order to get real hands-on experience with products and processes. I am humbled and jealous all at once. Good luck with the virtualization and server reduction efforts.

Thanks for taking the time to comment, Brian! The virtualization work will probably be a little later this year (I’m thinking 2nd half), but the new TMG server is something I hope to start tonight. The hardware arrived a couple of days ago, but SPS Indy kept me relatively busy until now. I hope it’s fun, but I’m confident it’ll be instructional if nothing else!

This is great stuff! Configuring servers has always been fun to me and I’m itching to get a little Hyper-V playground going, just to stay current. A self-hosted source control and build server would be awesome, as well. Perhaps one of these days. :)

Good luck with your upgrades!

Thanks for posting this! I really enjoyed reading it and it’s great to finally get to see your datacenter. In fact, I was even able to convince my wife to read it. I think it gave her an even greater appreciation for why people who are passionate about their profession and craft would set up such elaborate home datacenters; that’s priceless. Thanks again!

David, Paul: thanks for your comments!

One of the Hyper-V VMs I have running, David, is a TFS server for source control; the other half of my “professional life” is spent doing development, and Hyper-V has made it easy to try things out I might not otherwise be inclined to spend time on. Good luck with the playground you’re envisioning!

Paul, I’m glad to hear that the post might have given you some “ammo.” I was speaking with someone else about that very topic this weekend, and he indicated that he might drag his wife to the screen and say, “See, Honey — it could *definitely* be much worse than what I’ve got” :-)

Love it! It’s nice to see someone also *not* going the route of a rack-mounted configuration and setup. Interesting that loss of power supplies is the top failure. In my experience it’s disk failures in the RAID arrays. Only had two power supplies blow up (yes, with a loud bang) on me.

Thanks, Stefan! You have an incredibly cool-looking setup yourself; very impressive. With 125TB of storage, it’s not hard to understand why drive failure would be your number one source of problems :-)

I’ve had a few drives go out over the years. To tell you the truth, I’m surprised I haven’t experienced more failures. I’m not going to look a gift horse in the mouth, though.

I appreciate your feedback!