I first presented (in some organized capacity) on SharePoint’s platform caching capabilities at SharePoint Saturday Ozarks in June of 2010, and since that time I’ve consistently received a growing number of questions on the topic of SharePoint BLOB caching. When I start talking about BLOB caching itself, the area that seems to draw the greatest number of questions and “really?!?!” responses is the use of the max-age attribute and how it can profoundly impact client-server interactions.

I’d been promising a number of people (including Todd Klindt and Becky Bertram) that I would write a post about the topic sometime soon, and recently decided that I had lollygagged around long enough.

Before I go too far, though, I should probably explain why the max-age attribute is so special … and even before I do that, we need to agree on what “caching” is and does.

Caching 101

Why does SharePoint supply caching mechanisms? Better yet, why does any application or hardware device employ caching? Generally speaking, caching is utilized to improve performance by taking frequently accessed data and placing it in a state or location that facilitates faster access. Faster access is commonly achieved through one or both of the following mechanisms:

- By placing the data that is to be accessed on a faster storage medium; for example, taking frequently accessed data from a hard drive and placing it into memory.

- By placing the data that is to be accessed closer to the point of usage; for example, offloading files from a server that is halfway around the world to one that is local to the point of consumption to reduce round-trip latency and bandwidth concerns. For Internet traffic, this scenario can be addressed with edge caching through a content delivery network such as that which is offered by Akamai’s EdgePlatform.

Oftentimes, data that is cached is expensive to fetch or computationally calculate. Take the digits in pi (3.1415926535 …) for example. Computing pi to 100 decimals requires a series of mathematical operations, and those operations take time. If the digits of pi are regularly requested or used by an application, it is probably better to compute those digits once and cache the sequence in memory than to calculate it on-demand each time the value is needed.
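To make the pi example concrete, here is a minimal Python sketch of compute-once-then-cache. It has nothing to do with SharePoint's own caches; the function names are hypothetical, and Machin's formula is simply one convenient way to generate the digits.

```python
from decimal import Decimal, getcontext
from functools import lru_cache


def _arctan_inverse(x: int, precision: int) -> Decimal:
    """Return arctan(1/x) from its Taylor series, to roughly `precision` digits."""
    getcontext().prec = precision
    x_dec = Decimal(x)
    x_squared = x_dec * x_dec
    power = 1 / x_dec  # holds 1 / x^(2k+1), starting with k = 0
    total = power
    threshold = Decimal(10) ** -precision
    n, sign = 1, -1
    while True:
        power /= x_squared
        n += 2
        term = power / n
        if term < threshold:
            return total
        total += sign * term
        sign = -sign


@lru_cache(maxsize=None)
def pi_digits(decimals: int) -> str:
    """Compute pi, truncated to `decimals` places; results are cached per argument."""
    precision = decimals + 10  # guard digits to absorb rounding error
    pi = 16 * _arctan_inverse(5, precision) - 4 * _arctan_inverse(239, precision)
    return str(pi)[: decimals + 2]  # "3." plus the requested decimals


first = pi_digits(100)   # computed the slow way
second = pi_digits(100)  # answered from the in-memory cache
```

The second call never re-runs the series; `pi_digits.cache_info()` reports it as a cache hit.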

Caching usually improves performance and scalability, and these ultimately tend to translate into a better user experience.

SharePoint and caching

Through its publishing infrastructure, SharePoint provides a number of different platform caching capabilities that can work wonders to improve performance and scalability. Note that yes, I did say “publishing infrastructure” – sorry, I’m not talking about Windows SharePoint Services 3 or SharePoint Foundation 2010 here.

With any paid version of SharePoint, you get object caching, page output caching, and BLOB caching. With SharePoint 2010 and the Office Web Applications, you also get the Office Web Applications Cache (for which I highly recommend this blog post written by Bill Baer).

Each of these caching mechanisms and options works to improve performance within a SharePoint farm by using a combination of the two mechanisms I described earlier. Object caching stores frequently accessed property, query, and navigational data in memory on WFEs. Basic BLOB caching copies images, CSS, and similar resource data from content databases to the file system of WFEs. Page output caching piggybacks on ASP.NET page caching and holds SharePoint pages (which are expensive to render) in memory and serves them back to users. The Office Web Applications Cache stores the output of Word documents and PowerPoint presentations (which is expensive to render in web-accessible form) in a special site collection for subsequent re-use.

Public-facing SharePoint

Each of the aforementioned caching mechanisms yields some form of performance improvement within the SharePoint farm by reducing load or processing burden, and that’s all well and good … but do any of them improve performance outside of the SharePoint farm?

What do I even mean by “outside of the SharePoint farm?” Well, consider a SharePoint farm that serves up content to external consumers – a standard/typical Internet presence web site. Most of us in the SharePoint universe have seen (or held up) the Hawaiian Airlines and Ferrari websites as examples of what SharePoint can do in a public-facing capacity. These are exactly the type of sites I am focused on when I ask about what caching can do outside of the SharePoint farm.

For companies that host public-facing SharePoint sites, there is almost always a desire to reduce load and traffic into the web front-ends (WFEs) that serve up those sites. These companies are concerned with many of the same performance issues that concern SharePoint intranet sites, but public-facing sites have one additional concern that intranet sites typically don’t: Internet bandwidth.

Even though Internet bandwidth is much easier to come by these days than it used to be, it’s not unlimited. In the age of gigabit Ethernet to the desktop, most intranet users don’t think about (nor do they have to concern themselves with) the actual bandwidth to their SharePoint sites. I can tell you from experience that such is not the case when serving up SharePoint sites to the general public.

So … for all the platform caching options that SharePoint has, is there anything it can actually do to assist with the Internet bandwidth issue?

Enter BLOB caching and the max-age attribute

As it turns out, the answer to that question is “yes” … and of course, it centers around BLOB caching and the max-age attribute specifically. Let’s start by looking at the <BlobCache /> element that is present in every SharePoint Server 2010 web.config file.

BLOB caching disabled

[sourcecode language="xml"]
<BlobCache location="C:\BlobCache\14" path="\.(gif|jpg|jpeg|jpe|jfif|bmp|dib|tif|tiff|ico|png|wdp|hdp|css|js|asf|avi|flv|m4v|mov|mp3|mp4|mpeg|mpg|rm|rmvb|wma|wmv)$" maxSize="10" enabled="false" />
[/sourcecode]

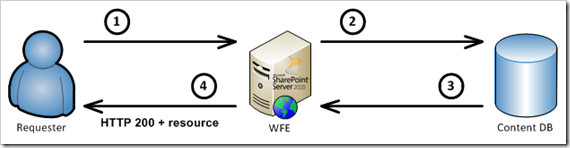

This is the default <BlobCache /> element that is present in all starting SharePoint Server 2010 web.config files, and astute readers will notice that the enabled attribute has a value of false. In this configuration, BLOB caching is turned off and every request for BLOB resources follows a particular sequence of steps. The first request in a browser session looks like this:

In this series of steps:

1. A request for a BLOB resource is made to a WFE

2. The WFE fetches the BLOB resource from the appropriate content database

3. The BLOB is returned to the WFE

4. The WFE returns an HTTP 200 status code and the BLOB to the requester

Here’s a section of the actual HTTP response from the server (step #4 above):

[sourcecode highlight="2"]
HTTP/1.1 200 OK
Cache-Control: private,max-age=0
Content-Length: 1241304
Content-Type: image/jpeg
Expires: Tue, 09 Nov 2010 14:59:39 GMT
Last-Modified: Wed, 24 Nov 2010 14:59:40 GMT
ETag: "{9EE83B76-50AC-4280-9270-9FC7B540A2E3},7"
Server: Microsoft-IIS/7.5
SPRequestGuid: 45874590-475f-41fc-adf6-d67713cbdc85
[/sourcecode]

You’ll notice that I highlighted the Cache-Control header line. This line gives the requesting browser guidance on what it should and shouldn’t do with regard to caching the BLOB resource (typically an image, CSS file, etc.) it has requested. This particular combination basically tells the browser that it’s okay to cache the resource for the current user, but the resource shouldn’t be shared with other users or outside the current session.
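If it helps to see the header pulled apart, the following is a small Python sketch that splits a Cache-Control value into its directives. The function name is hypothetical and the parsing is deliberately simplistic (it does not handle quoted values that contain commas).

```python
from typing import Dict, Optional


def parse_cache_control(value: str) -> Dict[str, Optional[str]]:
    """Split a Cache-Control header value into a {directive: argument} mapping."""
    directives: Dict[str, Optional[str]] = {}
    for part in value.split(","):
        part = part.strip()
        if not part:
            continue
        name, _, argument = part.partition("=")
        # Directives without an argument (e.g. "private") map to None.
        directives[name.strip().lower()] = argument.strip().strip('"') or None
    return directives


# Header value from the response above (BLOB caching disabled).
disabled_response = parse_cache_control("private,max-age=0")
```

For the response above, this yields a `private` directive with no argument and a `max-age` of `"0"`.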

Since the browser knows that it’s okay to privately cache the requested resource, subsequent requests for the resource by the same user (and within the same browser session) follow a different pattern:

When the browser makes subsequent requests like this for the resource, the HTTP response (in step #2) looks different than it did on the first request:

[sourcecode]
HTTP/1.1 304 NOT MODIFIED
Cache-Control: private,max-age=0
Content-Length: 0
Expires: Tue, 09 Nov 2010 14:59:59 GMT
[/sourcecode]

A request is made and a response is returned, but the HTTP 304 status code indicates that the requested resource wasn’t updated on the server; as a result, the browser can re-use its cached copy. Being able to re-use the cached copy is certainly an improvement over re-fetching it, but again: the cached copy is only used for the duration of the browser session – and only for the user who originally fetched it. The requester also has to contact the WFE to determine that the cached copy is still valid, so there’s the overhead of an additional round-trip to the WFE for each requested resource anytime a page is refreshed or re-rendered.
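The revalidation round trip can be sketched as a toy Python handler. This is an illustration only, not SharePoint's implementation: it assumes the browser revalidates by sending the ETag back in an `If-None-Match` request header (one of the standard HTTP mechanisms), and the handler name and placeholder body are made up.

```python
from typing import Optional, Tuple

# Stand-ins for a BLOB held in a content database (ETag taken from the response above).
RESOURCE_ETAG = '"{9EE83B76-50AC-4280-9270-9FC7B540A2E3},7"'
RESOURCE_BODY = b"placeholder image bytes"


def handle_request(if_none_match: Optional[str]) -> Tuple[int, bytes]:
    """Return (status, body): 304 with no body when the client's ETag still matches."""
    if if_none_match == RESOURCE_ETAG:
        return 304, b""          # client may re-use its cached copy
    return 200, RESOURCE_BODY    # first request, or the resource has changed


first_status, first_body = handle_request(None)             # nothing cached yet
repeat_status, repeat_body = handle_request(RESOURCE_ETAG)  # revalidation
```

Note that the repeat request saves the payload but not the round trip, which is exactly the overhead described above.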

BLOB caching enabled

Even if you’re not a SharePoint administrator and generally don’t poke around web.config files, you can probably guess at how BLOB caching is enabled after reading the previous section. That’s right: it’s enabled by setting the enabled attribute to true as follows:

[sourcecode language="xml"]
<BlobCache location="C:\BlobCache\14" path="\.(gif|jpg|jpeg|jpe|jfif|bmp|dib|tif|tiff|ico|png|wdp|hdp|css|js|asf|avi|flv|m4v|mov|mp3|mp4|mpeg|mpg|rm|rmvb|wma|wmv)$" maxSize="10" enabled="true" />
[/sourcecode]

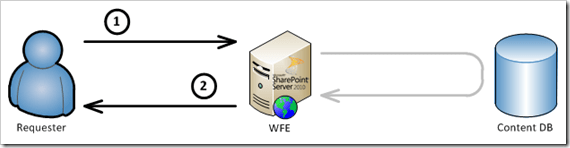

When BLOB caching is enabled in this fashion, the request pattern for BLOB resources changes quite a bit. The first request during a browser session looks like this:

In this series of steps:

1. A request for a BLOB resource is made to a WFE

2. The WFE returns the BLOB resource from a file system cache

The gray arrow that is shown indicates that at some point, an initial fetch of the BLOB resource is needed to populate the BLOB cache in the file system of the WFE. After that point, the resource is served directly from the WFE so that subsequent requests are handled locally for the duration of the browser session.

As you might imagine based on the interaction patterns described thus far, simply enabling the BLOB cache can work wonders to reduce the load on your SQL Servers (where content databases are housed) and reduce back-end network traffic. Where things get really interesting, though, is on the client side of the equation (that is, the Requester’s machine) once a resource has been fetched.

What about the max-age attribute?

You probably noticed that a max-age attribute wasn’t specified in the default (previous) <BlobCache /> element. That’s because max-age is actually an optional attribute. It can be added to the <BlobCache /> element in the following fashion:

[sourcecode language="xml"]
<BlobCache location="C:\BlobCache\14" path="\.(gif|jpg|jpeg|jpe|jfif|bmp|dib|tif|tiff|ico|png|wdp|hdp|css|js|asf|avi|flv|m4v|mov|mp3|mp4|mpeg|mpg|rm|rmvb|wma|wmv)$" maxSize="10" enabled="true" max-age="43200" />
[/sourcecode]

Before explaining exactly what the max-age attribute does, I think it’s important to first address what it doesn’t do and dispel a misconception that I’ve seen a number of times. The max-age attribute has nothing to do with how long items stay within the BLOB cache on the WFE’s file system. max-age is not an expiration period or window of viability for content on the WFE. Unlike other caches, the server-side BLOB cache doesn’t expire items out of it. New assets will replace old ones via a maintenance thread that regularly checks associated site collections for changes, but there’s no regular removal of BLOB items from the WFE’s file system BLOB cache simply because of age. max-age has nothing to do with server-side operations.

So, what does the max-age attribute actually do then? Answer: it controls information that is sent to requesters for purposes of specifying how BLOB items should be cached by the requester. In short: max-age controls client-side cacheability.

The effect of the max-age attribute

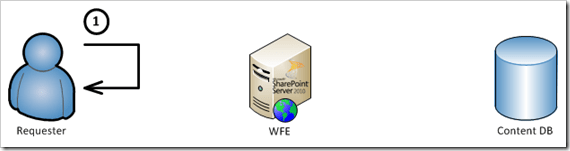

max-age values are specified in seconds; in the case above, 43200 seconds translates into 12 hours. When a max-age value is specified for BLOB caching, something magical happens with BLOB requests that are made from client browsers. After a BLOB cache resource is initially fetched by a requester according to the previous “BLOB caching enabled” series of steps, subsequent requests for the fetched resource look like this for a period of time equal to the max-age:

You might be saying, “hey, wait a minute … there’s only one step there. The request doesn’t even go to the WFE?” That’s right: the request doesn’t go to the WFE. It gets served directly from local browser cache – assuming such a cache is in use, of course, which it typically is.

Why does this happen? Let’s take a look at the HTTP response that is sent back with the payload on the initial resource request when BLOB caching is enabled:

[sourcecode highlight="2"]
HTTP/1.1 200 OK
Cache-Control: public, max-age=43200
Content-Length: 1241304
Content-Type: image/jpeg
Last-Modified: Thu, 22 May 2008 21:26:03 GMT
Accept-Ranges: bytes
ETag: "{F60C28AA-1868-4FF5-A950-8AA2B4F3E161},8pub"
Server: Microsoft-IIS/7.5
SPRequestGuid: 45874590-475f-41fc-adf6-d67713cbdc85
[/sourcecode]

The Cache-Control header line in this case differs quite a bit from the one that was specified when BLOB caching was disabled. First, the use of public instead of private tells the receiving browser or application that the response payload can be cached and made available across users and sessions. The response header max-age attribute maps directly to the value specified in the web.config, and in this case it basically indicates that the payload is valid for 12 hours (43,200 seconds) in the cache. During that 12-hour window, any request for the payload/resource will be served directly from the cache without a trip to the SharePoint WFE.
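The decision a cache makes during that window boils down to a simple comparison. Here is a rough Python sketch of it; the function name is hypothetical, and real browsers apply further rules (user-initiated reloads, heuristics, and so on) that are ignored here.

```python
SECONDS_PER_HOUR = 3600


def served_from_browser_cache(age_seconds: float, max_age: int) -> bool:
    """True while the cached copy is still fresh, i.e. younger than max-age."""
    return age_seconds < max_age


max_age = 43200                              # value from the web.config example
window_hours = max_age / SECONDS_PER_HOUR    # 12.0

one_hour_later = served_from_browser_cache(1 * SECONDS_PER_HOUR, max_age)
next_morning = served_from_browser_cache(13 * SECONDS_PER_HOUR, max_age)
with_zero_max_age = served_from_browser_cache(1, 0)
```

With a max-age of 0 the copy is never fresh, which is why the earlier (BLOB caching disabled) responses always led to a revalidation request.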

Implications that come with max-age

On the plus side, serving resources directly out of the client-side cache for a period of time can dramatically reduce requests and overall traffic to WFEs. This can be a tremendous bandwidth saver, especially when you consider that assets which are BLOB cached tend to be larger in nature – images, media files, etc. At the same time, serving resources directly out of the cache is much quicker than round-tripping to a WFE – even if the round trip involves nothing more than an HTTP 304 response to say that a cached resource may be used instead of being retrieved.

While serving items directly out of the cache can yield significant benefits, I’ve seen a few organizations get bitten by BLOB caching and excessive max-age periods. This is particularly true when BLOB caching and long max-age periods are employed in environments where images and other BLOB cached resources are regularly replaced and changed out. Let me illustrate with an example.

Suppose a site collection that hosts collaboration activities for a graphic design group is being served through a Web application zone where BLOB caching is enabled and a max-age period of 43,200 seconds (12 hours) is specified. One of the designers who uses the site collection arrives in the morning, launches her browser, and starts doing some work in the site collection. Most of the scripts, CSS, images, and other BLOB assets that are retrieved will be cached by the user’s browser for the rest of the work day. No additional fetches for such assets will take place.

In this particular scenario, caching is probably a bad thing. If a colleague uploads a revised image mid-morning, the designer’s browser keeps showing the copy it cached earlier until the 12-hour window lapses. Users trying to collaborate on images and other similar (BLOB) content are probably going to be disrupted by the effect of BLOB caching. The max-age value (duration) in use would either need to be dialed back significantly or BLOB caching would have to be turned off entirely.

What you don’t see can hurt you

There’s one more very important point I want to make when it comes to BLOB caching and the use of the max-age attribute: the default <BlobCache /> element doesn’t come with a max-age attribute value, but that doesn’t mean that there isn’t one in use. If you fail to specify a max-age attribute value, you end up with the default of 86,400 seconds – 24 hours.

This wasn’t always the case! In some recent exploratory work I was doing with Fiddler, I was quite surprised to discover client-side caching taking place where previously it hadn’t. When I first started playing around with BLOB caching shortly after MOSS 2007 was released, omitting the max-age attribute in the <BlobCache /> element meant that a max-age value of zero (0) was used. This had the effect of caching BLOB resources in the file system cache on WFEs without those resources getting cached in public, cross-session form on the client-side. To achieve extended client-side caching, a max-age value had to be explicitly assigned.

Somewhere along the line, this behavior was changed. I’m not sure where it happened, and attempts to dig back through older VM images (for HTTP response comparisons) didn’t give me a read on when Microsoft made the change. If I had to guess, though, it probably happened somewhere around service pack 1 (SP1). That’s strictly a guess, though. I had always made a habit of explicitly including a max-age value – even if it was zero – so it wasn’t until I was playing with the BLOB caching defaults in a SharePoint 2010 environment that I noticed the 24-hour client-side caching behavior by default. I then backtracked to verify that the behavior was present in both SharePoint 2007 and SharePoint 2010, and it affected both authenticated and anonymous users. It wasn’t a fluke.

So watch out: if you don’t specify a max-age value, you’ll get 24-hour client-side caching by default! If users complain of images that “won’t update” and stale BLOB-based content, look closely at max-age effects.
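One quick way to audit this is to read the <BlobCache /> element and work out which max-age is actually in effect. The Python sketch below does that for a fragment of web.config; the helper name is hypothetical, the path attribute is shortened for readability, and the 86,400-second fallback encodes the default behavior observed above rather than anything read from SharePoint itself.

```python
import xml.etree.ElementTree as ET
from typing import Optional

# Assumption: default observed in this post when max-age is omitted.
ASSUMED_DEFAULT_MAX_AGE = 86400


def effective_max_age(blob_cache_xml: str) -> Optional[int]:
    """Return the client-side max-age in seconds, or None if BLOB caching is disabled."""
    element = ET.fromstring(blob_cache_xml)
    if element.get("enabled", "false").lower() != "true":
        return None
    raw = element.get("max-age")
    return int(raw) if raw is not None else ASSUMED_DEFAULT_MAX_AGE


# Shortened path attribute; a real element lists many more extensions.
implicit = r'<BlobCache location="C:\BlobCache\14" path="\.(gif|jpg|png|css|js)$" maxSize="10" enabled="true" />'
explicit = r'<BlobCache location="C:\BlobCache\14" path="\.(gif|jpg|png|css|js)$" maxSize="10" enabled="true" max-age="43200" />'

implicit_seconds = effective_max_age(implicit)
explicit_seconds = effective_max_age(explicit)
```

If the implicit case comes back as a full day and your content changes more often than that, setting max-age explicitly is the safer choice.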

An alternate viewpoint on the topic

As I was finishing up this post, I decided that it would probably be a good idea to see if anyone else had written on this topic. My search quickly turned up Chris O’Brien’s “Optimization, BLOB caching and HTTP 304s” post which was written last year. It’s an excellent read, highly informative, and covers a number of items I didn’t go into.

Throughout this post, I took the viewpoint of a SharePoint administrator who is seeking to control WFE load and Internet bandwidth consumption. Chris’ post, on the other hand, was written primarily with developer and end-user concerns in mind. I wasn’t aware of some of the concerns that Chris points out, and I learned quite a few things while reading his write-up. I highly recommend checking out his post if you have a moment.

Additional Reading and References

- Event: SharePoint Saturday Ozarks (June 2010)

- Blog Post: We Drift Deeper Into the Sound … as the (BLOB Cache) Flush Comes

- Blog: Todd Klindt’s SharePoint Admin Blog

- Blog: Becky Bertram’s Blog

- Definition: lollygag

- Technology: Akamai’s EdgePlatform

- Wikipedia: Pi

- TechNet: Cache settings operations (SharePoint Server 2010)

- Bill Baer: The Office Web Applications Cache

- SharePoint Site: Hawaiian Airlines

- SharePoint Site: Ferrari

- W3C Site: Cache-Control explanations

- Tool: Fiddler

- Blog Post: Chris O’Brien: Optimization, BLOB caching and HTTP 304s
